What might the world look like a century from now? This is not an idle question: if we were able to forecast the ultra-long-term future with at least some degree of accuracy, our approach to policymaking would be very different. For example, if we consider federally funded R&D since World War II, much of it looks like a random walk, albeit one down a not-so-gentle incline. But now imagine that, back in 1950, America’s top forecasters had universally predicted that the world would somehow be electronically interconnected by 2000, and that what they referred to as “cyber-warfare” and “cyber-espionage” would pose an unprecedented (and unanticipated) threat to U.S. socio-economic security. How then might the U.S. have chosen to allocate its R&D dollars over the ensuing half century?

For policymakers, the ability to act in response to accurate long-term forecasts would be transformative. Setting policy on a steady, multi-decade path would enable governments at every level to break free of today’s reactive, two-steps-forward, one-step-back (or sideways) pattern of policymaking. Rather than simply talking about the long-term consequences of their actions (think energy policy today), it would enable them to adopt truly proactive and strategic approaches to, for example, city, transportation and environmental development. The potential socio-economic benefits would be immense.

The challenge, of course, is that ultra-long-term forecasting has always been somewhere between notoriously difficult and impossible. In 1900, for instance, the German company Hildebrand attempted to predict life in the year 2000: it saw a world in which people flew with the help of mechanical wings and walked on water aided by personal balloons; a world where entire cities were built under gigantic roofs to protect them from the elements; and skies crowded with airships. Oddly, however, Hildebrand thought that how people dressed would remain unchanged during the ensuing century.

It’s easy to mock Hildebrand’s lack of prophetic prowess. Yet more than a century later, in an era of big data, advanced analytics and machine learning, we still find it hard to figure out next week’s weather, never mind what the world might look like in 2113 (or, for that matter, a mere ten years out in 2023). So why does even modestly long-term forecasting remain so far beyond our grasp?

At the heart of our inability to think long-term is our tendency to view the future through the lens of the present, regardless of how far ahead we are peering. For the most part we (and the majority of forecasting models, whatever their designers claim) simply extrapolate in a more or less straight line: we see more powerful cellphones, more economical cars, more pervasive social networking… essentially improved versions of whatever we have today. And legislators develop policies that at best reflect those modest extrapolations, and at worst remain mired in the present, or even the past. U.S. spectrum policy over the past few decades is illustrative of the latter approach, as is the General Data Protection Regulation (GDPR) proposed by the European Union last year. As drafted, the GDPR signally failed to comprehend how individuals now interact with the Internet, and also completely missed developments such as big data. It is currently in the process of being amended to death.

Nor is industry immune from present-day bias. When Ford’s market-leading Taurus first came under sustained attack from Honda’s Accord, Ford asked existing Taurus buyers what they wanted from the next-generation car. Their answer: more of the same, only better, but still a Taurus. Honda, by contrast, asked a diverse group of people to envision their ideal mid-size sedan. Freed from the tyranny of the present, their dreams helped shape a new-generation Accord that swiftly trounced all of its competitors.

Today, however, Honda is in the same spot Ford was in a quarter-century ago, with no answer to the novel design language and hyper-efficient manufacturing that Hyundai is using to challenge both its and Toyota’s mass-market dominance. Ford, meanwhile, has finally freed its own product planners and designers from the constraints of its past, and is starting to successfully take on Honda at its own game.

The lesson for policymakers (not to mention automakers) is simple: don’t extrapolate, envision. Step outside the present and consider the potential implications of trends and ideas that today seem barely conceivable. What might a quantum economy look like? What if 3D printers could manufacture almost everything? What if the technological singularity occurs? What if many of the products we use consist of matter that is programmable? Then work back from those currently implausible scenarios and consider the potential social, economic, technological and other implications. Do the policies being pursued today at least notionally factor in any of those consequences? Should they?

For example, quantum computers will be able to crack many of today’s common encryption algorithms (e.g., asymmetric algorithms such as RSA and elliptic curve cryptography) with dismissive ease. True quantum machines don’t yet exist, but they may in as little as 20 years, which means we need to start rethinking our online security architectures now, a Sisyphean task for both industry and policymakers. Similarly, we will also need to reshape economic policy, and industrial policy in particular, quite swiftly if 3D printers become substitutes for factories (perhaps with the help of advanced materials developed by quantum computers). Considering such scenarios is at the heart of strategic foresight and of genuinely long-term policy development.

But stepping outside the present is extremely difficult. In particular, creating scenarios that contain no elements of the present, or envisioning “alternative worlds” that turn present-day assumptions on their heads, feels like the province of fiction rather than forecasting. Which is why many futurists look to science fiction to free their thinking from present-day bias.

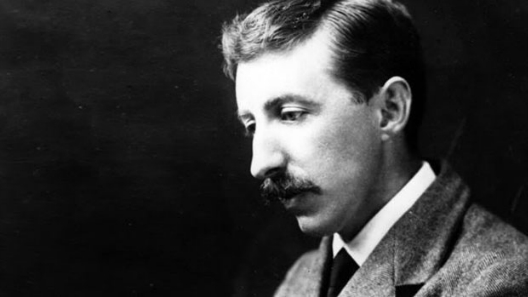

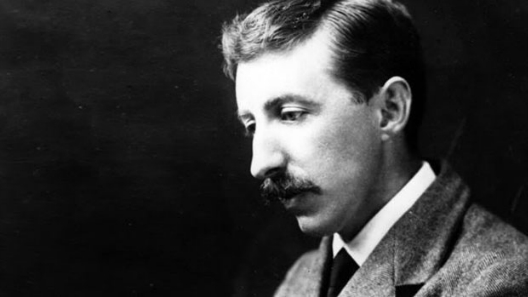

More than a century ago one novelist in particular tried his hand at forecasting the future, and got it spectacularly right. In his relatively obscure short story “The Machine Stops,” E.M. Forster envisioned a future in which every need is met by The Machine, an all-powerful electro-mechanical god worshipped by those in its care. The Machine cossets the Earth’s now-subterranean inhabitants—including Vashti, the subject of Forster’s tale—in the safety of womb-like rooms they need never leave:

Imagine, if you can, a small room, hexagonal in shape, like the cell of a bee. It is lighted neither by window nor by lamp, yet it is filled with a soft radiance. There are no apertures for ventilation, yet the air is fresh. There are no musical instruments, and yet, at the moment that my meditation opens, this room is throbbing with melodious sounds. … There were buttons and switches everywhere—buttons to call for food, for music, for clothing. … There was the button that produced literature. And there were of course the buttons by which she communicated with her friends. The room, though it contained nothing, was in touch with all that she cared for in the world.

It’s impossible not to marvel at Forster’s foresight. Writing in 1909, he envisioned with stark clarity many world-changing technologies we now take for granted, including global air travel, the Internet, teleconferencing, massive open online courses (MOOCs) and social networking; he also predicted the potentially negative side-effects of communicating without physically connecting, and of over-dependence on technology.

For anyone seeking to peer far beyond the horizon, “The Machine Stops” is essential late-summer reading. And for policymakers in particular, it hints at how accurate strategic foresight might enable truly transformative long-term policies, assuming those policies are not under the aegis of authorities as short-sighted and paranoid as those in Forster’s story.

Peter Haynes is a senior fellow for the Atlantic Council’s Strategic Foresight Initiative and senior director, advanced strategies and research, at Microsoft Corporation.