WASHINGTON—The recent standoff between Anthropic and the Pentagon over terms of use for the company’s artificial intelligence (AI) models has thrust the role of AI in military and intelligence operations into the national dialogue. As the Pentagon’s contract negotiations with Anthropic broke down and it designated the company a supply chain risk earlier this month, the episode exposed the fraying social contract among leading AI companies, the federal government, and the American public over responsible AI use.

How Americans view AI

Anthropic’s red lines in the negotiations centered on two issues: the use of its models for the mass surveillance of US citizens and in autonomous weapons. Both topics resonate with an American public that remains deeply skeptical of the technology. A 2025 poll conducted by Gallup and the Special Competitive Studies Project found that 60 percent of Americans distrust AI somewhat or fully. This stands in contrast to much of the rest of the world. According to Stanford’s annual AI Index, large majorities in China, Indonesia, and Thailand (75-80 percent) believe AI-powered products offer more benefits than drawbacks. In the United States, that number is a meager 39 percent.

Several factors drive this skepticism. Safety concerns, including fears related to AI-driven psychosis and AI-enabled teen suicides, feature prominently in public discourse, as do worries about the technology’s environmental footprint and its impact on jobs. Search “AI and water” on Instagram and you’ll be flooded with posts from influencers calling on followers to boycott AI over the energy and water demands of the data centers powering it. Recent mass layoffs, such as fintech company Block’s decision to cut 40 percent of its workforce due to the integration of AI into the company’s workflows, have amplified fears around broader workforce contractions. Some studies have extrapolated from initial data around AI adoption to suggest that the technology will create more jobs than it eliminates, but much of the public discussion has focused on the prospect of significant job losses on the horizon, raising anxiety among white-collar workers.

This unease with AI is increasingly visible in politics. More than 1,500 AI-related bills have been introduced in state legislatures in 2026 alone, many focused on protecting consumers and minors from AI-related harms. AI skepticism has come from both sides of the aisle. Data centers have drawn criticism from left-leaning environmental advocates and from deep-red communities alike. A study found that twenty data center projects were blocked in the second quarter of 2025 due to local opposition, representing $98 billion in stalled investment. This year, Democratic and Republican lawmakers have begun backing away from data center investments that they recently championed. At least six Democratic governors used their state of the state addresses to announce plans to roll back incentives or impose new regulations on data centers. And Democratic lawmakers in New York and Maine, as well as Republican lawmakers in Oklahoma, are calling for temporary bans.

The Trump administration’s approach to AI

The second Trump administration has made AI a national priority from the outset. Just three days after his inauguration, US President Donald Trump issued the first of seven executive orders related to AI released in 2025, which signaled the administration’s intent to “sustain and enhance America’s global AI dominance in order to promote human flourishing, economic competitiveness, and national security.” The order set the tone for the administration’s follow-on actions, including a foundational AI Action Plan that positioned the United States as going all-in on AI against the backdrop of a rising global competition with China. So far, the administration has expanded AI education opportunities, worked to harness AI for science, accelerated permitting for data center construction, and attempted to prevent states from passing laws regulating AI.

Yet, even before the Anthropic-Pentagon controversy, tensions between the administration’s position on AI and its own political base were surfacing. Upon the release of the AI Action Plan in July 2025, former US Representative Marjorie Taylor Greene issued a pointed rebuke. She warned that “competing with China does not mean become like China by threatening state rights, replacing human jobs on a mass scale, creating mass poverty, and resulting in potentially devastating effects on our environment and critical water supply.” The administration’s push to preempt and pause future state laws regulating AI was defeated twice in Congress before being advanced by executive order in December 2025. The original congressional campaigns drew widespread pushback from across the political spectrum, including a request, signed by seventeen Republican governors, to remove the legislative provision.

Recent announcements suggest the administration is beginning to recognize public resistance. In his State of the Union address, Trump introduced a ratepayer protection pledge that calls on technology companies to commit to covering the cost of increased energy production to support the build-out of data centers. This is intended to prevent those costs from being passed on to local communities. Seven of the largest players in AI have since signed on. A National Policy Framework on AI released at the end of last week reaffirms this push and lays out the administration’s legislative priorities for the technology, including enhanced safeguards for children, increased action to combat AI-enabled scams, and protections for individuals against unauthorized distribution of AI-generated voice or image likenesses.

Despite these moves, the administration’s handling of the Anthropic standoff has intensified debates in public and within the tech sector around the dangers of AI and the necessity of building guardrails for responsible use. The administration’s maximalist position that contracts with AI companies should provide flexibility for the government to employ AI for “all lawful uses” runs counter to US public opinion. Indeed, 80 percent of US adults believe the government should maintain rules for AI safety and data security, even if doing so slows development.

Public distrust on display

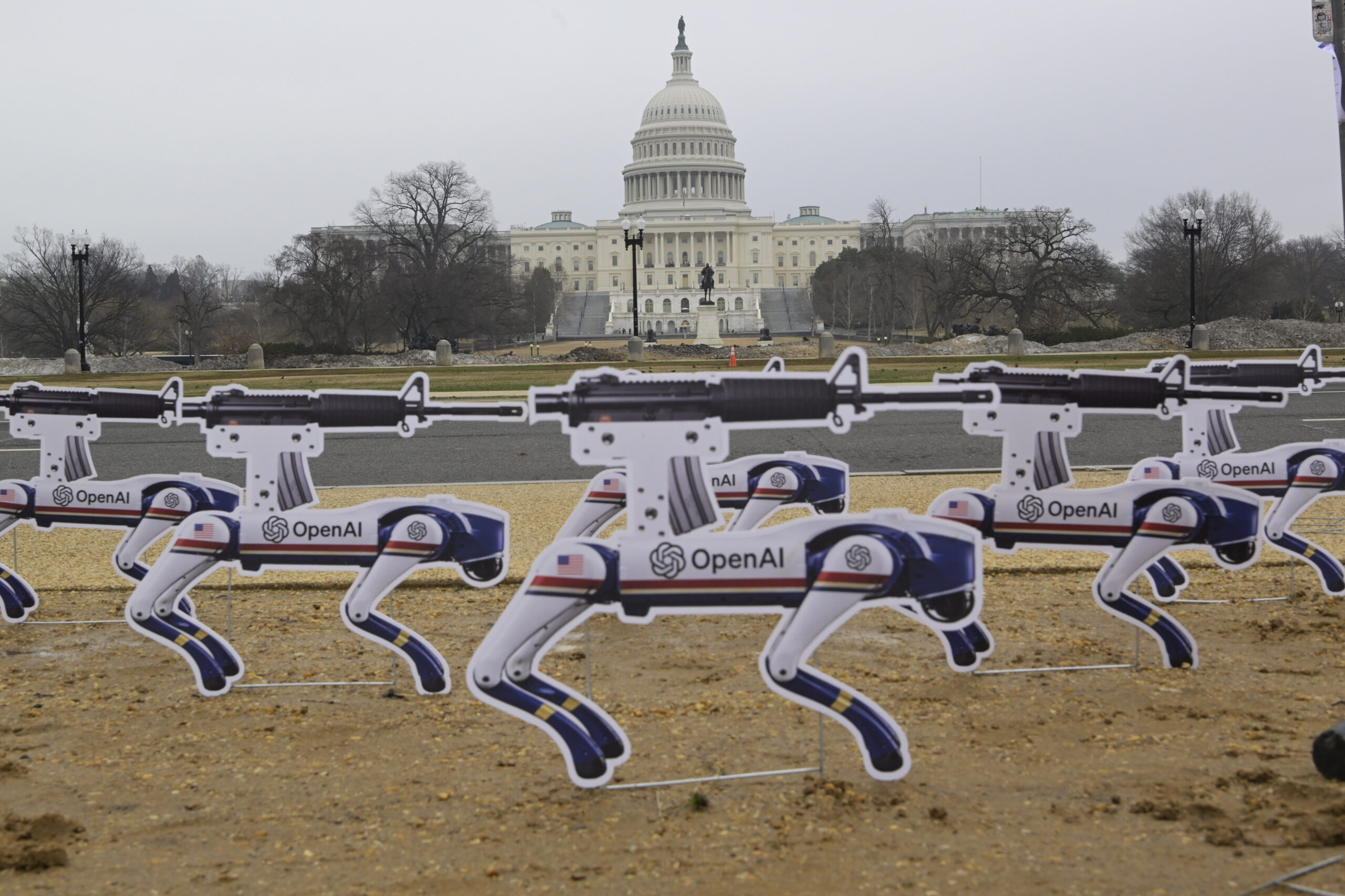

On February 27, OpenAI CEO Sam Altman announced that the company had signed a deal with the Pentagon, one it claimed contained the same provisions Anthropic had been fighting for. Public and private reactions were swift, with many skeptical of the company’s claims. Uninstalls of the ChatGPT app jumped 295 percent overnight, and a #QuitGPT campaign gained steam on social media. Some OpenAI employees publicly criticized their company’s stance, and OpenAI’s hardware lead resigned in protest.

Anthropic, meanwhile, filed suit to contest the Pentagon’s designation of the company as a supply chain risk, which followed the two sides’ failure to agree on contractual terms. The case has attracted amicus briefs from a wide range of groups, including tech sector workers, Catholic theologians and ethicists, and the American Civil Liberties Union. A brief signed by a group of almost forty employees from Google and OpenAI, including Google’s chief scientist, affirmed a shared belief in the risks underpinning Anthropic’s contractual red lines. Their brief noted the dangers to US democracy posed by AI-enabled surveillance and warned that today’s AI systems are too immature to be relied on for use in lethal autonomous weapons.

While the immediate controversy may be fading, the episode has already provided a revealing window into US sentiment around AI, and the ongoing litigation will keep the issue in public focus. A poll conducted by NBC News this month, after the standoff, found that 57 percent of registered voters believe the risks of AI outweigh its benefits.