For years, Facebook has quietly and very intentionally inserted itself into the daily lives of its users. It has succeeded wildly, becoming arguably the world’s most ubiquitous communication platform, with an average of 1.28 billion daily users. But now that it has become one of the world’s most popular sources of news, Facebook is failing to take real responsibility for that exceptional position in order to counter the spread of disinformation.

After being criticized as tone deaf for not recognizing the role that fake news shared on Facebook played in the 2016 US presidential election, the company released a white paper on “Information Operations and Facebook” in April 2017. It explains how hostile actors seek to undermine Facebook’s mission to “give people the power to share and make the world more open and connected,” through what it calls false amplifiers (trolls), targeted data collection, and false content creation. But, the company writes, it is “in a position to help constructively shape the emerging information ecosystem by ensuring [Facebook] remains a safe and secure environment for authentic civic engagement.”

As laid out in the paper, the company’s response includes using algorithms to locate and disable fake accounts (30,000 of which were deleted prior to the French presidential election), marking fake news as “disputed” in users’ feeds, working with political campaigns and high-risk individuals to keep personal data secure, educating the public about fake news, and supporting civil society programs focused on media literacy.

Facebook’s plans do not go far enough. Identifying and neutralizing troll accounts is important to stop the spread of a false story, but it is not a panacea: research shows that the more often fake news is repeated, the less likely people are to question it. So by the time “false amplifiers” are deleted, the damage may already be done. And deleting fake accounts won’t do much to stop Russia or other hostile actors from creating thousands more to replace them.

Furthermore, in its early efforts to debunk disinformation, Facebook seems to have driven more traffic to disputed stories. Psychologists also debate the utility of debunking as a whole; the “continued influence effect” demonstrates that false information affects people’s thinking even after it has been proven untrue. Rather than simply marking a story “disputed,” Facebook should call a spade a spade and change that moniker to “false.” Then it should use its considerable technological capacity to ensure that stories known to be untrue are not further promoted.

Facebook’s more substantive responses to the fake news phenomenon—its plans to support education, media literacy, and journalism (as part of the Facebook Journalism Project)—also ring hollow. While the initiative is undoubtedly still in development, it seems to be replete with buzzwords but few concrete solutions.

Facebook says it will “work with partners to evolve our current [storytelling] formats” to make them work better for news outlets and users. This is a fancy way of describing new product development, which most likely would have occurred regardless of the fake news phenomenon. These new tools would largely benefit existing media organizations with a wide reach. Independent outlets and nongovernmental organizations, even when equipped with new tech toys, are still subject to the whims of Facebook’s algorithm, which rewards sensationalist, attention-grabbing titles and punishes diligent but potentially mundane reporting. Facebook should consider giving a boost to independent media organizations dedicated to truthful investigative reporting, either by deliberately prioritizing them in the algorithm or by creating a grant program that gives these organizations free or reduced-cost Facebook advertising.

Facebook’s educational efforts, both those described in its white paper and those already launched, do not present a vision for advanced media literacy. They center heavily on debunking, amounting to print ads and a page in Facebook’s help center listing tips to identify fake news. I have written before about why fact-checking is not the answer to the world’s disinformation ills, so it is discouraging to see Facebook’s first efforts so concentrated on labeling stories true or false.

But Facebook’s yet-to-be-unveiled partnership with the News Literacy Project, which will “include videos and other multimedia elements familiar to Facebook users [to] raise awareness of the importance of being a skeptical and responsible consumer of news and information,” is encouraging, albeit light on details. Will these PSAs be delivered in multiple languages or targeted to populations more likely to consume disinformation? How will they encourage interaction before users scroll past the ad to the next cat video in their feed? How will they avoid being labeled partisan in an increasingly polarized media environment?

People have the right to share whatever they like online. But if Facebook embraces its role as a responsible news organization, not just an abyss into which anyone can not only shout profanities but lob highly dangerous bombs, there may be hope for us yet.

Nina Jankowicz is a 2016-2017 Fulbright-Clinton Public Policy Fellow in Ukraine, where she conducts research on anti-disinformation activities across Europe. The views presented here are her own. She tweets @wiczipedia.

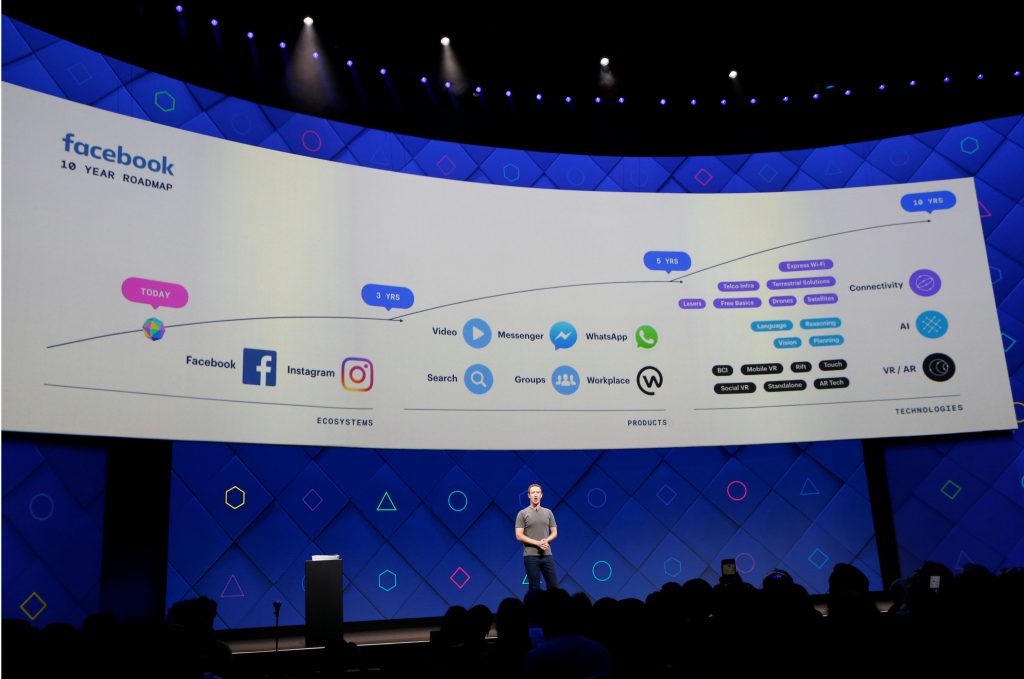

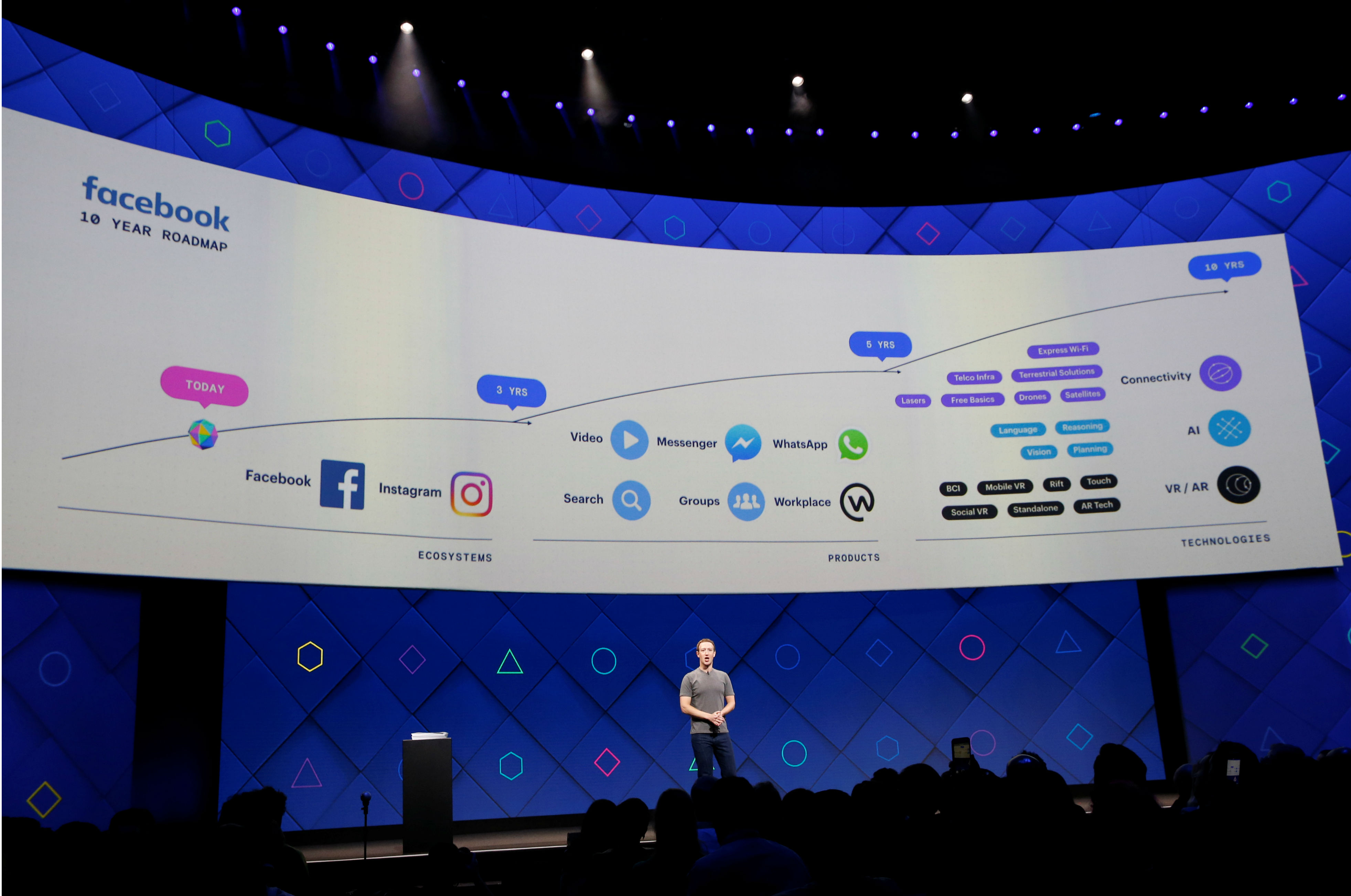

Image: Facebook Founder and CEO Mark Zuckerberg speaks on stage during the annual Facebook F8 developers conference in San Jose, California, U.S., April 18, 2017. REUTERS/Stephen Lam