Buying down risk: Container security

Containers are a form of virtualization that provides distinct advantages over more traditional virtual machine (VM) architecture. Whereas every VM runs its own operating system, containers do away with this overhead: they package only the dependencies necessary for a specific application to run and sit directly on a host server’s OS. The two designs are not mutually exclusive, as containers can also be built on top of virtual machines. Containers lend themselves to increased segregation of services, file systems, input/output streams, and more.

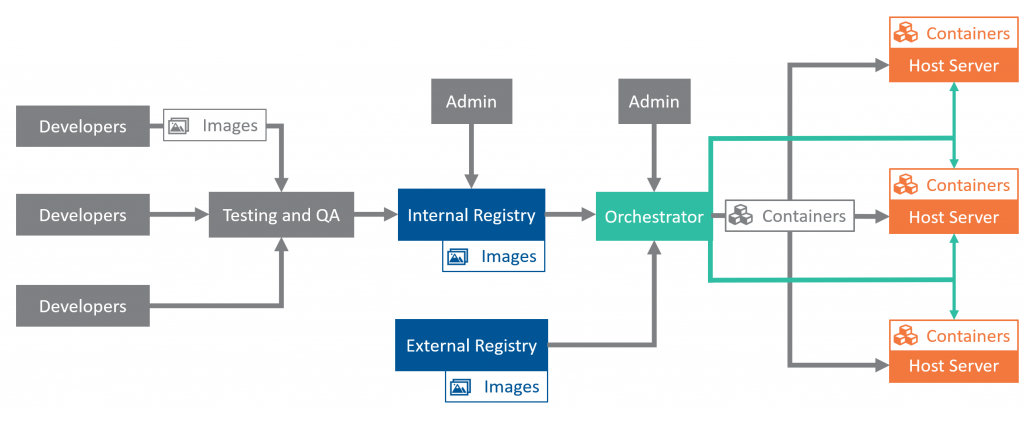

Containers have unique design and development requirements. Every instance of a container is created from a static image, which is stored in a registry. When containers fail or need rebuilding, they are reconstructed from the image. To update containers, developers first update an image and then push the new version to the registry. In environments running many containers at once, orchestrators coordinate the building and allocation of resources among containers, as well as container-to-container communication. Orchestrators typically sit between registries and the servers that host containers, converting images into containers according to workload needs and available resources while acting as the go-to coordinator for the system’s disparate parts. An additional layer of servers, called master nodes, might sit between the orchestrator and groups of host servers, facilitating communication among containers, host servers, and the orchestrator.
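The image-to-container lifecycle described above can be illustrated with a minimal sketch. It is a toy model only: the `Image`, `Registry`, and `Container` classes and the `rebuild` function are hypothetical names invented for illustration and do not correspond to the API of Docker, Kubernetes, or any real registry.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Image:
    """A static, versioned image (hypothetical model, not a real registry API)."""
    name: str
    version: int
    dependencies: tuple


@dataclass
class Registry:
    """Stores the most recently pushed image for each image name."""
    images: dict = field(default_factory=dict)

    def push(self, image: Image) -> None:
        self.images[image.name] = image

    def pull(self, name: str) -> Image:
        return self.images[name]


@dataclass
class Container:
    """A running instance created from an image."""
    image: Image
    healthy: bool = True


def rebuild(registry: Registry, container: Container) -> Container:
    """Replace a failed or outdated container with a fresh one from the registry."""
    return Container(image=registry.pull(container.image.name))


registry = Registry()
registry.push(Image("web", 1, ("libssl",)))
running = Container(registry.pull("web"))

# A developer updates the image and pushes the new version to the registry.
registry.push(Image("web", 2, ("libssl", "zlib")))

# The existing container keeps running version 1 until it is rebuilt.
running.healthy = False
replacement = rebuild(registry, running)
```

In this sketch the running container is never modified in place. It is discarded and replaced from whatever image the registry currently holds, which is why the registry becomes both a control point and an attractive target.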

Benefits of containerization

Containers provide several performance and security benefits. Because they contain all the requisite dependencies for the software they run, containers are extremely portable. They are generally less resource-intensive than VMs because containers do not require an entire OS, which makes creating a new container instance easier. These attributes make containers extremely well suited to, and thus popular across, the many flavors of cloud services. Registries provide a natural chokepoint in the software development process: images must first be uploaded to registries before percolating out into updated containers, so strict, automated reviews at the registry can enhance container quality downstream. Moreover, the registry system and clear insight into container contents and versioning allow for fine-grained control over patching. System administrators can choose to let updated containers percolate through a network naturally, to schedule batches of containers for updates, or to reinitialize all containers at once. Implemented well, this process can ensure service continuity and provide a more agile update schedule.
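The three patching options mentioned above can be sketched as a small planning function. This is an illustrative simplification, not how any particular orchestrator schedules updates; the strategy labels (`"all_at_once"`, `"batched"`, `"natural"`) and the `plan_updates` function are hypothetical.

```python
def batches(items, size):
    """Yield consecutive slices of `items`, each with at most `size` elements."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def plan_updates(container_ids, strategy, batch_size=2):
    """Return a list of update rounds; each round is a list of container IDs.

    Strategy names are hypothetical labels for the three options in the text.
    """
    if strategy == "all_at_once":
        # Reinitialize every container in a single round.
        return [list(container_ids)]
    if strategy == "batched":
        # Update a few containers per round so the rest keep serving traffic.
        return [list(b) for b in batches(list(container_ids), batch_size)]
    if strategy == "natural":
        # No forced rounds: containers pick up the new image only when rebuilt.
        return []
    raise ValueError(f"unknown strategy: {strategy}")


fleet = ["c1", "c2", "c3", "c4", "c5"]
all_rounds = plan_updates(fleet, "all_at_once")
batched_rounds = plan_updates(fleet, "batched", batch_size=2)
natural_rounds = plan_updates(fleet, "natural")
```

The batched plan trades speed for continuity: only `batch_size` containers are out of service in any round, while the all-at-once plan patches fastest but briefly takes the whole fleet down.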

Security in a containerized world

Container architecture raises several key security considerations. Registries and image repositories are attractive, centralized targets for attackers seeking to corrupt container instances. Ensuring the integrity of images, both when they are added to registries and when they are built into containers, is critical. Moreover, developers often rely on popular container images distributed across repositories, opening an avenue for supply-chain attacks reminiscent of exploits of popular open source packages. Attackers who gain access to one container will try to move into other environments, so strict segregation between containers is essential. Because containers can sit directly on the OS of their server without the separation that a hypervisor provides for VMs, an attacker may try to move down into the server to gain access to all its containers. The attacker could also move upward in the system hierarchy toward either the orchestrator or the intermediary servers that coordinate between the orchestrator and the container servers (also called worker nodes).
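One common way to check image integrity is to compare an image's cryptographic digest against a value recorded when the image was approved. The sketch below illustrates the idea using only Python's standard library; the `digest` and `verify_image` helpers and the sample byte strings are hypothetical, and real registries and signing tools implement considerably more than this.

```python
import hashlib
import hmac


def digest(image_bytes: bytes) -> str:
    """Return a SHA-256 digest string for the raw image contents."""
    return "sha256:" + hashlib.sha256(image_bytes).hexdigest()


def verify_image(image_bytes: bytes, expected_digest: str) -> bool:
    """Check that the image contents still match the pinned digest."""
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(digest(image_bytes), expected_digest)


# Hypothetical stand-ins for image contents pulled from a registry.
trusted_image = b"example image contents"
pinned = digest(trusted_image)  # recorded when the image was reviewed

tampered = trusted_image + b" plus injected payload"

ok = verify_image(trusted_image, pinned)
bad = verify_image(tampered, pinned)
```

A digest check of this kind only detects changes made after the digest was recorded. Establishing an image's origin additionally requires a signature from a trusted publisher, which is outside the scope of this sketch.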

Containers lend themselves well to the dynamic workloads and agile development of cloud systems such as AWS and Azure. Yet Unit 42, the cybersecurity consulting arm of Palo Alto Networks, found vulnerabilities in those systems resulting from container architecture, as have other researchers. During their analysis of Azure’s container-as-a-service (CaaS) offering, Azure Container Instances (ACI), Unit 42 analysts managed to deploy a malicious container to ACI and escape the container environment to run as root on their host node. From there, the analysts spread to other nodes through the master node sitting above their host, abusing quirks in node communication with the master. More broadly, Red Hat research on common container systems found that 94 percent of users of one of the most common orchestrators, Kubernetes, reported experiencing security incidents, with a third of those reporting major vulnerabilities and 47 percent reporting fears of exposure due to misconfiguration.

Recommendations

- Voluntary codes of practice: Key industry players should develop, publicize, and adopt voluntary best practices for container security. These codes of practice might eventually develop into acquisition requirements and certifications. The largest cloud providers (Microsoft, Google, and AWS) are well situated to develop these standards in collaboration with container technology entities like Kubernetes and Docker. NIST Special Publication 800-190, “Application Container Security Guide,” and the “Security Guidance for 5G Cloud Infrastructures” series published jointly by CISA and the National Security Agency (NSA) serve as useful starting points, providing crucial security practices at the technical and administrative levels. Meanwhile, the Cloud Native Computing Foundation (CNCF), a Linux Foundation project focused on fostering the development of cloud-native open source projects, is already well positioned to coordinate an industry-led effort, with membership from Microsoft, AWS, Oracle, Cisco, and SAP and oversight of dozens of the most critical cloud and container technologies. Industry input should provide more detailed and practical technical approaches to container security and recommend tooling needs. Other industry input should include:

  - standards for vetting both image integrity and origin as well as for registry management and security,

  - best practices for cross-container communication and the development-to-registry pipeline, and

  - standards for providing configuration best practices and resources to customers. This last point is particularly critical because containerization is part of the shared-responsibility paradigm in cloud service provision. If vendors do not make security transparent and straightforward for users, the consequences could easily spiral outside the bounds of a single compromised environment. Recent attacks that compromised Docker containers to deploy cryptocurrency-mining malware aptly illustrate the dangers of misconfiguration, as do the results of the aforementioned Red Hat survey. Codes of practice must include sections specific to empowering users of container services with relevant security tooling and configuration guidance.

- Leverage customers’ buying power: Although the purveyors of critical, container-enabled technologies can voluntarily adopt codes of practice, their main customers have equal power and responsibility to demand such assurances from vendors as a condition of doing business. Large corporate entities not usually considered cybersecurity vendors—major investment and commercial banks, retail companies, and manufacturing firms—should require that their IT vendors establish and adhere to the set of standards and practices discussed above in order to inspire a baseline of customer confidence, using their immense buying power as leverage to better secure their own systems and networks while also improving the ecosystem as a whole. Likewise, the federal government should use its acquisition security levers—FedRAMP and applicable DOD processes—to further incentivize these codes of practice.

- Secure critical open source container infrastructure: Most container systems rely on just a handful of orchestrator, registry, and image technologies, many of which, including Kubernetes and Docker, are open source. Many proprietary service offerings depend on these linchpin technologies, yet Red Hat found that in 2021, 94 percent of survey respondents still encountered security incidents in their container environments. As more cloud and computing systems rely on containerized environments, the security of those underlying infrastructures will become increasingly critical.

  - Industry, including Microsoft, Google, IBM, and Amazon, should commit resources to the development of security tooling for container environments, particularly automated configuration-management tools, given that misconfiguration accounted for 60 percent of security incidents in the aforementioned survey. The CNCF, which is already linked to many of these tooling projects, should serve as the conduit between projects and enterprise-sourced resources. Google’s recent submission of its Knative tool for Kubernetes to the CNCF illustrates a viable path for the incubation and provision of marquee industry tooling. Industry, CISA, and the NSA can also collaborate to identify additional tooling useful for adhering to the security practices recommended in the jointly published “Security Guidance for 5G Cloud Infrastructures.”

  - CISA should identify Kubernetes and similar services as critical linchpin technologies in line with the language in Executive Order 14028 and increase resource commitments as needed. Cloud-services security does not need to be limited to products as used but should address technologies as designed and deployed. In this area, container management looms large.

  - The establishment of Critical Technology Security Centers (CTSCs) added to the House-passed COMPETES Act (HR 4521) offers a model for federal outreach to open source container components. The provisions would create at least four CTSCs for the security of network technologies, connected industrial control systems, open source software, and federal critical software. These CTSCs would work with the input of the DHS Under Secretary of Science and Technology and the Director of CISA to study, test the security of, coordinate community funding for, and generally support CISA’s work regarding their respective technologies. The Center for Open Source Software Security should work with CISA to coordinate the protection of critical open source container infrastructure.

- Establish an architectural resilience review process for service of services: The continued containerization of cloud systems and other services will speed up change in already dynamic environments, improving innovation and development. However, that swiftness can compromise long-term architectural planning and structural review of the vast, critical cloud systems it enables. Industry should coordinate with CISA, the Office of the National Cyber Director (ONCD), and the NSA on long-term architectural reviews of the largest, most critical containerized systems in the form of biannual architecture review meetings to review case studies as well as best and worst industry practices. ONCD should be responsible for the organization and strategic vision for this process, executed through CISA in partnership with NSA. NIST should develop a publication series on long-term architectural practices based on these industry-wide fora within two years of their start, which several compliance and acquisition regimes could incorporate down the road.