Buying down risk: Memory safety

Computer code is ultimately a set of instructions for a machine to perform on simple numbers—storing them, using basic arithmetic, retrieving them, and deleting them, much like a calculator. Memory allocation is an important component of all operations ordered by a program. To carry out instructions, a program must allocate sufficient memory in the machine—at the simplest level, this means reserving enough storage to perform each task. Programming languages take different approaches to this allocation. A high-level language like Python or Java will handle allocation for the programmer automatically by finding, adding, and freeing memory as an item is created, grows, shrinks, or gets discarded.

Low-level languages, most commonly C and C++, require programmers to manage memory directly. These languages provide developers with commands to allocate, reallocate, and free chunks of memory, as well as ways to reference specific locations in a machine’s memory, regardless of the content there. These languages are well suited to systems programming, giving instructions directly to the guts of a machine to produce programs with very fast performance. That freedom also creates risk, allowing a variety of bugs such as buffer overflows, memory leaks, and dangling pointers. These issues, called memory-safety errors, can result from simple typos and forgotten lines of code or from complex memory structures and unforeseen interactions. Both Google and Microsoft found that about 70 percent of their discovered security vulnerabilities stemmed from memory-safety issues. The two most-referenced memory-unsafe languages, C and C++, top some rankings of the most widely used coding languages and feature prominently on all others.
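To make the buffer-overflow case concrete, here is a minimal sketch, written in Rust purely for illustration, of the length checks that C leaves to the programmer. The function names `read_at` and `copy_into` are hypothetical and do not come from any real codebase.

```rust
/// Read one byte from `buffer`, returning `None` rather than touching
/// memory outside the buffer when `index` is out of range.
fn read_at(buffer: &[u8], index: usize) -> Option<u8> {
    buffer.get(index).copied()
}

/// Copy `source` into the start of `destination`, refusing the copy when it
/// would not fit. An unchecked copy in C (for example, `memcpy` with a wrong
/// length) would instead write past the end of the destination buffer.
fn copy_into(destination: &mut [u8], source: &[u8]) -> Result<(), String> {
    if source.len() > destination.len() {
        return Err(format!(
            "source is {} bytes but destination holds only {}",
            source.len(),
            destination.len()
        ));
    }
    destination[..source.len()].copy_from_slice(source);
    Ok(())
}

fn main() {
    let mut small = [0u8; 4];
    // Fits: three bytes into a four-byte buffer.
    println!("{:?}", copy_into(&mut small, b"abc"));
    // Does not fit: the copy is rejected instead of overflowing.
    println!("{:?}", copy_into(&mut small, b"too long for four bytes"));
    // In-range and out-of-range reads.
    println!("{:?}", read_at(&small, 0));
    println!("{:?}", read_at(&small, 10));
}
```

The point of the sketch is only that every access is checked against the buffer's length; a language that omits the check trades that safety for speed and flexibility.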

Several factors contribute to the popularity of these risky languages. The first is precedent: C was developed in 1972, and C++ in 1983 as an extension of C that added several capabilities, including object-oriented programming. Modern operating systems were built on C, and many high-level programming languages and their compilers are also written in C. Both languages are decades old, and huge, hard-to-replace systems underpinning the digital world are written in them. Moreover, their lack of memory safety is as much a feature as a danger. C and C++ translate quickly from what the programmer writes to what the machine reads. Operating systems incorporate C and C++ precisely because of their speed and direct access to memory, which enable powerful design options, but this lack of guardrails equally allows dangerous errors.

Technical solutions

Memory safety presents a significant opportunity to stem the creation of new vulnerabilities and further limit the harm of existing ones with a relatively small set of solutions. Several protections for memory safety exist. For memory-unsafe languages like C and C++, there are tools to compile and run programs with dynamic memory checks. The open source suite Valgrind is one of the more common. However, the complexity of memory management causes Valgrind both to miss some types of error and to flag practices that are actually safe, limiting both its coverage and its precision.

In addition, some programming languages have been explicitly designed to make memory-safety issues impossible without sacrificing the performance that systems programming requires. One of the most notable of these is Rust, originally developed at Mozilla. Other memory-safe languages, such as Swift, C#, and F#, serve specific uses but were not explicitly designed to supplant unsafe languages. Rust enforces rules that a program must satisfy in order to compile, which prevents these classes of memory-safety errors by construction. Rust also provides unsafe modes, allowing manual memory management when explicitly required within subsections of code.
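As a rough illustration of those compile-time rules and of an unsafe subsection, consider the following minimal sketch. The function names (`consume`, `total`, `read_raw`) are hypothetical and chosen only for this example.

```rust
/// Takes ownership of `buffer`. Its memory is freed automatically when this
/// function returns, so the caller can no longer use it afterward.
fn consume(buffer: Vec<u8>) -> usize {
    buffer.len()
}

/// Borrows `buffer` without taking ownership; the caller keeps using it.
fn total(buffer: &[u8]) -> u32 {
    buffer.iter().map(|&byte| u32::from(byte)).sum()
}

/// Reads through a raw pointer, which the compiler only allows inside an
/// explicitly marked `unsafe` block.
fn read_raw(value: &u32) -> u32 {
    let pointer: *const u32 = value;
    // SAFETY: `pointer` was just derived from a live reference, so it is
    // non-null, aligned, and points to an initialized `u32`.
    unsafe { *pointer }
}

fn main() {
    let data = vec![1u8, 2, 3];
    println!("sum = {}", total(&data)); // borrow: `data` is still usable
    println!("len = {}", consume(data)); // move: `data` is given away here

    // The next line, if uncommented, is rejected by the compiler because
    // `data` was moved into `consume` and its memory has been freed:
    // println!("sum = {}", total(&data));

    println!("raw = {}", read_raw(&42));
}
```

The commented-out line is the kind of use-after-free mistake that would compile in C; here the program does not build until it is removed.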

Adoption status

Many entities and corporations have homed in on memory safety as a critical contributor to ecosystem security, and many, including Microsoft, Amazon Web Services (AWS), Facebook, and Google, focus on the adoption and improvement of Rust as part of a solution. Projects include adding new components or rewriting old ones for Linux and the Android OS in Rust. Despite recent developments and Rust’s consistent user popularity, C and C++ remain ubiquitous, and adoption of Rust is somewhat slow. A few factors, all stemming from the challenges of language transition, contribute to Rust’s lagging adoption. First, the Rust rules that ensure memory safety also create a very steep learning curve. Although the language has ever-improving tooling, libraries, documentation, and contributors, these strict rules can require ground-up redesigns to implement common, familiar features. Second, when existing Rust libraries are lacking, the requisite code-level redesigns cause some programmers to feel they are reinventing the wheel while still relying on many external libraries and dependencies, slowing development and build times. Finally, transition to any new language imposes massive upfront costs on enterprises, from training or acquiring developers to integrating messy, resource-intensive rewrites of old, functional code with applications in other languages. In short, switching takes much time, effort, and money, and the transition inevitably stumbles along the way.

Recommendations

The following recommendations should ease, speed, and widen the adoption of memory-safe languages, reducing susceptibility to memory errors across widely used codebases. Although these actions closely fit Rust adoption, they can and should be applied to other memory-safe languages as best suited to specific implementations. Rust is not a cure-all, but it does provide an option for the particularly critical case of systems programming, where memory-safety issues are most prevalent, most ingrained, and most compromising. General industry support for Rust and other open source, memory-safe languages is crucial for their development and continued adoption.

- Make enterprise libraries open source and fill tooling needs: Industry groups and the Open Source Security Foundation (OpenSSF) should work with open source developers and maintainers to identify and remediate weaknesses in memory-safe development toolchains. Many large companies already use Rust. Open sourcing some proprietary Rust libraries and tools will provide developers with useful resources and increase the rate of language maturation. The private sector—starting with the Rust Foundation and its members, which include AWS, Google, and Microsoft—should identify gaps in Rust’s offerings and lead development there. Industry holds a unique position to valuably contribute to libraries that integrate Rust with other languages. The US government can speed these efforts by offering incentives for the development and sharing of these tools via direct spending from the Cybersecurity and Infrastructure Security Agency (CISA) and by incorporating and incentivizing memory-safe products in standard acquisitions and compliance processes (e.g., FedRAMP) enforced by the General Services Administration (GSA) and Department of Defense (DOD).

- Commit direct resources to ecosystem infrastructure and development: In addition to libraries and tooling, large companies should commit financial and development resources to the maturation of Rust. This work should involve both open source code, perhaps concentrated through an entity like OpenSSF, and proprietary code. With the creation of the Rust Foundation in February 2021 and membership from AWS, Google, Facebook, Huawei, Microsoft, and Mozilla, much of this work is under way, but the foundation should seek broader membership and deeper commitments, with as much intention of pulling entities into a memory-safe ecosystem as supporting the ecosystem itself. Large financial-sector entities like Bank of America and Capital One boast extraordinary cybersecurity budgets but face criticism for lackluster contributions to the cybersecurity of the digital ecosystem. Companies like IBM and Cisco with significant systems programming divisions, development capacity, and oftentimes extensive Rust experience should contribute to the development of the language and consider joining the Rust Foundation as well.

- Grow the workforce: Both in anticipation of increased labor demand for Rust developers and in order to emphasize the importance of memory safety throughout the computer science discipline, industry and government, through CISA, should encourage and even subsidize instruction in Rust and other relevant memory-safe languages at the undergraduate and graduate levels.

- Industry development standards for unsafe code: Developers have not yet fully leveraged and standardized Rust’s allowance of memory-unsafe code within specific blocks. The coding paradigm conveniently creates a natural development chokepoint for the review of unsafe components. Industry-standard review processes for unsafe Rust would increase ecosystem security and adoption, particularly given the necessity of unsafe code for multi-language integration and kernel and hardware programming. The Rust Foundation and OpenSSF should use the input of their members to collate and push out to all developers a set of best practices for using unsafe blocks. Other industry fora, such as SAFEcode, could provide broader input from companies outside the Rust Foundation as well. Mature conventions for unsafe Rust and broader memory-safety practices could eventually enter cybersecurity maturity assessments published by the National Institute of Standards and Technology (NIST) and nongovernmental standards groups.

- Commit to new builds and rewrites of old ones: Linux’s planned adoption of Rust for some new components (and possibly rewrites of old ones) with Google’s support illustrates a viable integration path. Further enterprise commitments to integration can ease the adoption of memory-safe languages without the need for massive rewrites. Other prime candidates for gradual Rust integration include new components in Windows OS and Linux tooling (both already begun), Chrome and Safari codebases, and certain cryptographic or internet-critical memory-unsafe systems under active maintenance.

- Identify and rust critical software: The Critical Technology Security Centers (CTSCs) added to the House-passed COMPETES Act (H.R. 4521) offer a vehicle to fund memory-safety improvements for critical software. The provisions would create at least four CTSCs for the security of network technologies, connected industrial control systems, open source software, and federal critical software. These CTSCs would work from the input of the Department of Homeland Security (DHS) Under Secretary for Science and Technology and the Director of CISA to study, test the security of, coordinate community funding for, and generally support CISA’s work regarding their respective technologies. CISA, in conjunction with Executive Order 14028’s focus on identifying and protecting critical software infrastructure in the supply chain, should work with the appropriate forthcoming CTSCs and private industry to identify critical, memory-unsafe software that should be rusted (rewritten in Rust) where possible and to allocate resources for the task. Such identification of critical, memory-unsafe code would be part of a wider effort, carried out through the CTSCs, to identify critical nodes of software dependence for both the federal government and the ecosystem at large.
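The recommendation above on development standards for unsafe code can be made more concrete with a small sketch. It shows one convention already common in the Rust community, offered here as an assumption about what such best practices might include rather than as an established industry standard: keep each unsafe operation small, document its preconditions, and expose it only through a safe wrapper. The function names `sum_raw` and `sum` are hypothetical.

```rust
/// Sums `len` values starting at `pointer`.
///
/// # Safety
/// `pointer` must be non-null, aligned, and valid for reads of `len`
/// consecutive, initialized `u32` values for the duration of the call.
unsafe fn sum_raw(pointer: *const u32, len: usize) -> u64 {
    // SAFETY: the caller upholds the contract documented above.
    let values = unsafe { std::slice::from_raw_parts(pointer, len) };
    values.iter().map(|&value| u64::from(value)).sum()
}

/// Safe wrapper: the only caller of `sum_raw`, and therefore the single
/// place a reviewer must inspect to confirm the contract is met.
fn sum(values: &[u32]) -> u64 {
    // SAFETY: a slice's pointer and length always describe valid, aligned,
    // initialized memory for as long as the borrow lasts.
    unsafe { sum_raw(values.as_ptr(), values.len()) }
}

fn main() {
    let readings = [10u32, 20, 30];
    println!("total = {}", sum(&readings));
}
```

Because the `unsafe` keyword marks every such block, a reviewer or an automated check can locate all of them by search, which is the chokepoint the recommendation describes.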

Other articles from the Buying Down Risk series

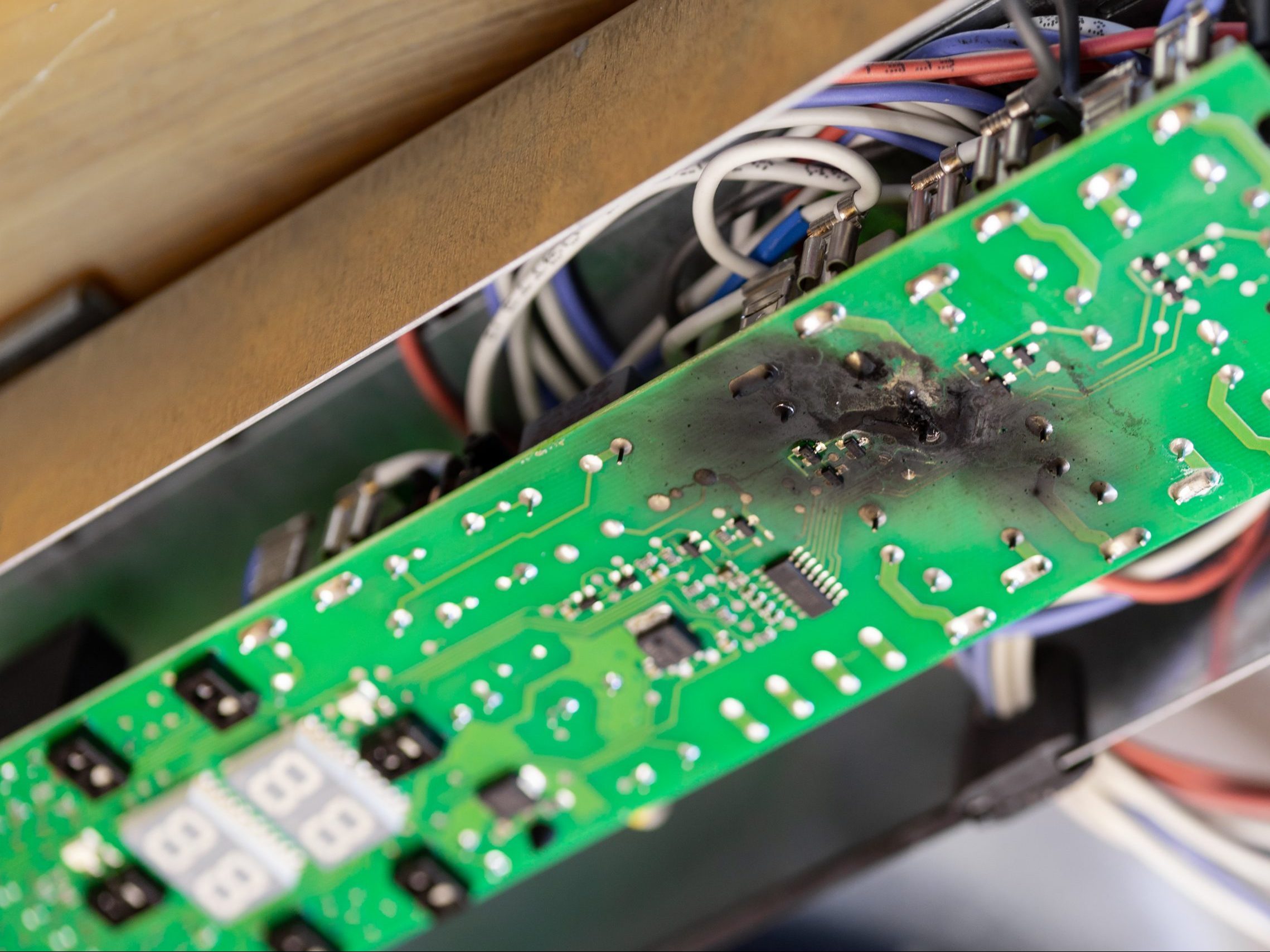

Image: Close up burnt green microchip after short circuit due to water damage. Damaged overheat control panel board of cooking stove.