How NATO can integrate AI to prevail in future algorithmic warfare

Bottom lines up front

- NATO’s competitive edge in the era of emerging and disruptive technologies will come from purposeful integration of AI technologies across the Alliance’s digital backbone.

- Military AI does not generate new vulnerabilities in kind, but it creates more room for human error and miscalculation.

- Victory in algorithmic warfare requires electromagnetic spectrum dominance.

Table of contents

- Executive summary

- Introduction

- Part one: Military AI tech trends

- Part two: The specter of algorithmic warfare

- Part three: Future scenarios

- Part four: Policy recommendations

- Conclusion

Executive summary

Military artificial intelligence (AI) is moving from the margins of experimentation into the core of how NATO will fight, make critical decisions, and deter competitors over the next decade. The 2022 NATO Strategic Concept identifies the technological edge as critical for the Alliance to fulfill its core tasks. Both contemporary warfare and renewed strategic competition suggest that data-driven AI decision-support systems and autonomous battlefield capabilities augmented with AI will define the character of future conflicts. A focus on evaluating the strategic risks associated with such systems is therefore justified.

This report argues that integrating AI into military systems does not generate vulnerabilities that are fundamentally new in kind compared to existing cyber risks. The difference lies in the consequences. Once AI-enabled decision-support systems and autonomous platforms become critical to Alliance operations, interference with data, models, and computing infrastructure may affect NATO's ability to see, decide, and act under pressure. Similarly, the offensive use of AI-enabled capabilities does not, on its own, raise or lower the nuclear threshold. Escalation thresholds in algorithmic warfare will continue to be driven by effects on the ground rather than by whether a system is AI-enabled. Yet the characteristics of AI (its speed, its opacity, and its dependence on physical infrastructure) create more room for human error, misperception, and miscalculation.

To explore these possibilities, the Atlantic Council's Transatlantic Security Initiative, in partnership with the NATO Office of the Chief Scientist, conducted a foresight study to clarify how adversaries might counter AI-enabled capabilities and to examine what this means for NATO doctrine, strategy, and deterrence. The research combined horizon scanning, expert interviews, an off-the-record workshop in Washington held under the Chatham House Rule, and scenario modeling. The project mapped AI technology trends across decision-support systems and autonomous platforms, identified likely AI vulnerabilities and vectors of attack, and explored escalation dynamics through structured discussion and scenario-based exercises.

This project brought a new perspective to the debate on the impact of transformative military AI on future warfare for two reasons. First, its scope is comprehensive, encompassing both the physical and cyber dimensions of algorithmic warfare. It foregrounds the AI triad of data, algorithms, and computing power and shows how each can be attacked through cyber, kinetic, and electromagnetic (EM) means. Second, it examines the intersection of AI and nuclear weapons from a different angle: Tailored nuclear weapons are treated as a potential countermeasure against military AI because of their electromagnetic pulse (EMP) effects.

There are two key findings.

While military AI does not generate "shock and awe" in and of itself, AI can exacerbate existing risk conditions for accidents and inadvertent escalation.

The report finds that the employment of military AI does not make the use of tailored nuclear weapons more likely. Instead, the choice of target, physical damage, and casualties are what matter. Workshop participants ranked responses to a notional AI-enabled drone saturation attack in the Baltic region by their perceived escalatory potential. Diplomatic action and electronic warfare were the most preferred responses, followed by kinetic strikes, cyber operations, and directed-energy weapons (DEW). Tailored nuclear EMP attacks were viewed as highly escalatory and politically unacceptable as a means for NATO to repel an attack over NATO territory, even when framed as a tool of "information warfare."

At the same time, military AI is expected to make a difference by increasing speed, autonomy, scale, and uncertainty. This research, however, revealed that the human remains the most vulnerable element of AI, more exposed than any of the three components of the AI triad. Humans are routinely exposed to phishing, social engineering, and cognitive bias, and they already run the risk of deskilling as more tasks are delegated to machines.

Integrating AI into military operations therefore creates dangers along two pathways. First, speed and data can work against their users. Compressed timelines can create cognitive problems in decision-making, and without safety and quality protocols in place, flooding decision-support systems with noisy or nonpatternable data can further thicken the fog of war for commanders. Second, as AI-enabled military systems become increasingly complex, they become prone to normal accidents, which makes foreign interference difficult to distinguish from system failures and therefore difficult to detect and expose.

Algorithmic warfare highlights the importance of electromagnetic spectrum dominance.

Digital modernization of defense—the data-centric approach and software-defined capabilities—will make electromagnetic threats more salient. Russia’s war in Ukraine already highlights how GPS jamming, communications blackouts, and electronic warfare shape combat operations. This trend will intensify as NATO begins to lean on AI-enabled and multidomain command and control.

Advances in military applications of AI further strengthen the convergence between the cyber domain of operations (digital code) and the electromagnetic environment (electrons). In a crowded and contested spectrum, where software-defined radios, commercial satellites, and cloud-linked data centers underpin military networks, the distinction between "cyber" and "conventional" attack begins to blur. The further fielding of directed-energy weapons also suggests that the center of gravity is shifting toward energy supplies.

Attacks on AI systems can use several vectors. The adversary can target model weights through espionage and hacking; poison training datasets; blind or spoof sensors on intelligence, surveillance, and reconnaissance (ISR) platforms; disable data relays; or physically damage hardware in data centers, cables, satellites, or uncrewed systems. Cyber operators, electronic warfare units, special forces, and conventional reconnaissance-strike systems may all participate in degrading AI-enabled capabilities. At the same time, the ongoing trend of lowering the cost of warfare will make any requirements for new protection measures, such as shielding or hardening, difficult to implement due to the trade-offs in terms of cost, weight, and endurance.

The report develops three future scenarios, alongside a baseline case, to identify likely implications of future algorithmic warfare for NATO's doctrine and strategy: guarded opportunism, brave new world, and minority report.

- Guarded opportunism outlines a future in which military AI, despite its transformative impacts, does not require the military to dramatically alter the rules of engagement. Instead of introducing qualitatively new risks or vulnerabilities, the challenges related to military AI remain manageable with disciplined cyber hygiene and resilient power supply. On the risk side, this scenario points to heightened dangers of AI-fueled hybrid warfare below the threshold of armed conflict.

- Brave new world is a less likely but more dangerous scenario detailing the conditions for escalation spirals. Transformative effects of AI lead to the conventionalization of nuclear weapons. The fielding of AI-enabled military capabilities provokes the adversary to use new nuclear-powered EM weapons. Nuclear EMP attacks come to be viewed as a legitimate use of nuclear weapons within algorithmic warfare.

- Minority report presents a different take on the possible algorithmic future in which AI technology hype drives strategy. This scenario focuses on cognitive challenges for political and military decision-makers, who tend to overestimate near‑term benefits and discount the long-term risks and compound challenges of AI integration. Instead of improving AI operational implementation processes, countries race to achieve phantom AI advantages that destabilize the international security environment.

To help NATO leverage transformative AI technologies and maintain the resulting advantage, this report makes seven recommendations for NATO leaders that can inform the adaptation of NATO's future strategy and doctrine.

- Master AI literacy. NATO needs to develop standards for continuous AI skill development for commanders, operators, and policymakers. AI literacy is not just a strategic competency but also an instrument of restraint.

- Engineer redundancy. Instead of creating a digital copy of all existing procedures, NATO should prioritize maintaining the ability to transmit information on rehearsed secondary systems.

- Coordinate the approach to the AI tech industry. NATO should develop a code of conduct for engagements with AI tech companies that addresses the formation of an exclusive suppliers' group, the knowledge gap in the private sector, and the rules for civilian software engineers in war zones.

- Maintain information dominance. NATO should develop a functional framework for operationalizing AI in support of algorithmic warfare that prioritizes military objectives over abstract benchmarks, and it should diversify its early warning systems.

- Clarify escalation thresholds. NATO should develop a shared understanding of escalation thresholds for algorithmic warfare, decide on response triggers, and predelegate command authority in time-compressed scenarios to avoid escalation risks and decision paralysis.

- Assess the electromagnetic layer accurately. Future algorithmic warfare will require NATO to treat electromagnetic spectrum operations as a distinct layer of multidomain operations to protect its strategic initiative and command-and-control superiority. To avoid underestimating the EM layer, NATO should also update its standards to reflect the changing scope of critical infrastructure as AI becomes a strategic asset.

- Deter by ambiguity. NATO should project resilience while cloaking its sensitive AI assets in a black box that adversaries cannot explain. However, such deterrence by ambiguity should not erode the internal accountability of NATO-run AI systems.

Introduction

The 2022 NATO Strategic Concept emphasizes the importance of the Alliance maintaining its technological edge to achieve mission success.[1: NATO, 2022, "NATO 2022 Strategic Concept," June 29, https://www.nato.int/content/dam/nato/webready/documents/publications-and-reports/strategic-concepts/2022/290622-strategic-concept.pdf.] But NATO's ability to ensure military effectiveness and uphold a credible deterrence and defense posture faces challenges in the era of emerging and disruptive technologies. In the context of rapidly evolving warfare tactics and renewed strategic competition, AI-powered decision-support systems (DSS) and autonomous battlefield capabilities are expected to shape future conflicts. NATO's 2022 Digital Transformation Vision therefore aimed to accelerate the adoption of data and AI analytics to unlock new advantages for the Alliance.[2: NATO, 2024, "NATO's Digital Transformation Implementation Strategy," October 17, https://www.nato.int/en/about-us/official-texts-and-resources/official-texts/2024/10/17/natos-digital-transformation-implementation-strategy.]

Accordingly, NATO's AI Strategy encourages strategic foresight activities to help allies achieve a reasonable level of AI readiness.[3: NATO, 2024, "NATO AI Strategy," July 10, https://www.nato.int/cps/en/natohq/official_texts_227237.htm.] It also focuses on anticipating new challenges and risks related to algorithmic warfare from adversarial use of AI. While the military potential of AI is versatile and uncertain, its importance to strategic competition has become difficult to overlook. Countries are racing to develop and deploy AI across their civilian economies and militaries. Russia, the most significant and direct threat to NATO allies, and the People's Republic of China, a strategic competitor seeking to control key technologies, have widely communicated their intentions to field AI for military purposes.[4: Stephen Herzog and Dominika Kunertova, 2024, "NATO and Emerging Technologies—The Alliance's Shifting Approach to Military Innovation," Naval War College Review 77, no. 2: 47–69.]

Research objective

The Atlantic Council’s Transatlantic Security Initiative, in partnership with the NATO Office of the Chief Scientist, has conducted a foresight study addressing this crucial topic. This effort seeks to gain more clarity on AI’s transformative military effects over the next decade. This report assesses the vulnerabilities entailed in AI integration into NATO military capabilities in the context of the digital transformation of defense and the growing importance of electromagnetic spectrum operations. Importantly, it identifies ways in which adversaries might counter future AI-enabled capabilities on and off the battlefield. The objective is thus to understand how these developments may affect NATO’s doctrine and strategy moving forward.

This report's focus on the transformative effects of military AI is highly relevant given NATO's ambition to conduct multidomain operations.[5: Andrea Gilli, Mauro Gilli, and Gorana Grgić, 2025, "NATO, Multi-Domain Operations and the Future of the Atlantic Alliance," Comparative Strategy 44, no. 1: 73–91, doi:10.1080/01495933.2024.2445491.] As outlined in the Digital Transformation Implementation Strategy, NATO political and military leaders intend to use advanced analytics in combination with multimodal data from sensor networks for consolidated, real-time multidomain situational awareness.[6: NATO, 2025, "NATO's Digital Transformation Implementation Strategy," last updated February 20, https://www.nato.int/cps/en/natohq/official_texts_229801.htm.] While the "digital backbone" is intended to enable command and control across all domains, a broader digital interoperability framework with a secured data-sharing ecosystem will enhance political consultation and decision-making processes.

This report therefore seeks to address the complex question of the likely implications of future military AI countermeasures for NATO's doctrine and strategy. This means identifying the risks from integrating transformative AI into military systems, examining the vulnerabilities the adoption of AI will create, assessing the severity and probability of corresponding adversarial attacks, and formulating recommendations. Importantly, to limit the dangers of technological determinism, this project examined how political and military leaders and policy planners (at the state level of decision-making) perceive new technologies appearing on the battlefield and how they craft their responses, including whether to escalate.[7: Toni Erskine and Steven E. Miller, 2024, "AI and the Decision to Go to War: Future Risks and Opportunities," Australian Journal of International Affairs 78, no. 2: 135–47, doi:10.1080/10357718.2024.2349598.]

Methodology

In terms of methodology, this report used several data collection and analysis tools. The first phase of the project consisted of horizon scanning and road mapping. Through a structured evidence-gathering process based on desk research of relevant open-source documents and background expert interviews, this report identified the most important drivers of change, as well as the likely future developments at the intersection of AI and the defense sector that are at the margins of current thinking and planning.

In the second phase, the Atlantic Council hosted an off-the-record closed workshop in Washington at the unclassified level. Through two prescripted discussions, conducted under the Chatham House Rule, policy and scholarly experts were asked to stress test the assumptions from the first phase. These discussions informed the project's assessment of the likelihood of AI countermeasures and of the conditions for escalation in future algorithmic warfare, and they helped validate the recommendations.

The third and last phase of the project centered on future scenario development. This is a useful policy analysis tool that visualizes a set of possible future conditions to help NATO decision-makers to anticipate challenges as they define capability requirements for NATO’s success in future algorithmic warfare.

Structure

This report proceeds as follows. Part One maps AI technology trends and their military applications over the next decade, from the battlefield to the war room. Part Two anticipates the vulnerabilities of AI-enabled systems, assesses the possible vectors of attack, and explores escalation pathways in algorithmic warfare. It covers both digital and physical dimensions across the so-called "AI triad" of algorithms, data, and computing power, and it adds a human factor.

Part Three outlines three algorithmic futures (guarded opportunism, brave new world, and minority report) based on the likely transformative effects of military AI and their impact on international security. This line of inquiry is highly relevant given ongoing research on the impact that robotic autonomous systems operating with minimal human supervision will have on future conflicts.[8: Catherine Caruso, 2024, "The Risks of Artificial Intelligence in Weapons Design," Harvard Medical School, August 7, https://hms.harvard.edu/news/risks-artificial-intelligence-weapons-design; and Michael C. Horowitz, 2016, "The Ethics & Morality of Robotic Warfare: Assessing the Debate over Autonomous Weapons," Daedalus 145, no. 4: 25–36, https://doi.org/10.1162/DAED_a_00409.]

Part Four discusses recommendations for NATO leaders. Based on the project’s findings, this report raises seven main action points that are categorized into three areas: AI readiness and resilience; military AI doctrine; and deterrence.

Part one: Military AI tech trends

AI is becoming a general-purpose military technology that will sit inside almost every digital system that NATO uses.[9: Michael C. Horowitz, 2018, "Artificial Intelligence, International Competition, and the Balance of Power," Texas National Security Review 1, no. 3: 36–57, https://doi.org/10.15781/T2639KP49.] Its transformative effects will likely concentrate in two areas. First, decision-support systems will expand the scale of information that military commanders can process, helping them make better decisions faster. Second, autonomous and semiautonomous platforms will shift how militaries sense, move, and strike on the battlefield. Together, these developments are ushering in an era of algorithmic warfare.[10: Benjamin M. Jensen, Christopher Whyte, and Scott Cuomo, 2022, Information in War: Military Innovation, Battle Networks, and the Future of Artificial Intelligence (Georgetown University Press), https://doi.org/10.2307/j.ctv2k88t2w.]

AI can, in principle, be implemented in everything that uses a computer. As defense establishments digitize, it is clear that AI is not a single-purpose capability in itself. Rather, AI architecture underpins modern command, control, communications, intelligence, surveillance, reconnaissance, logistics, and weapons systems. NATO's own definitions reflect this evolution. In 1995, NATO described AI as the capability of a functional unit to perform tasks generally associated with human intelligence, such as reasoning and learning. By 2005, it was also seen as "the branch of computer science" focused on building systems that reason, learn, and improve themselves.[11: NATO Standardization Office, 2025, "Artificial Intelligence," Official NATO Terminology Database, NATOTerm, accessed May 31, 2025, https://nso.nato.int/natoterm/Web.mvc.] These definitions now apply across a much broader digital ecosystem. Software has become a defining component of many weapon systems, and AI is increasingly embedded in sensors, networks, and command-and-control tools.

The overall expectations about AI's impact on future warfare can be captured in three concepts: speed, scale, and autonomy. Speed refers to faster sensing, processing, and engagement cycles. Scale refers to the ability to handle vast volumes of data and to coordinate large numbers of distributed assets, including swarms of uncrewed aircraft systems (UASs). Autonomy refers to the degree to which AI systems can operate with minimal human supervision. NATO's challenge will be to harness these three dimensions without sacrificing control, accountability, or interoperability.

From general-purpose enabler to algorithmic warfare

The military applications of AI span relatively low-stakes use cases such as administrative automation and training, operational functions like logistics and cybersecurity, and high-stakes roles in targeting, electronic warfare, and human-machine teaming in combat.[12: Jeppe Teglskov Jacobsen, 2025, "Military AI: American Experiences, Danish Opportunities [Militær AI: Amerikanske erfaringer, danske muligheder]," Center for Military Studies, April 30, https://cms.polsci.ku.dk/publikationer/militaer-ai/.] From a functional standpoint, experts in defense and military affairs expect the impact of AI to depend on the model type, broadly divided into four categories: generative AI, classification, prediction, and autonomy.[13: Jacob Stokes et al., 2025, "Averting AI Armageddon: U.S.-China-Russia Rivalry at the Nexus of Nuclear Weapons and Artificial Intelligence," Center for a New American Security, February 13, https://www.cnas.org/publications/reports/averting-ai-armageddon.] AI is expected to matter most in tasks in which large volumes of data must be processed quickly, where patterns are too complex for human perception, where actions need to follow real-time operational intelligence fast, and where simulated environments can meet high training requirements.

Generative AI: Content, coaching, and cognitive effects

Generative AI models create novel content, in response to human prompts, that mimics the statistical properties of the data on which they are trained. In the military context, these systems are likely to be used as "agents" or virtual advisers that relieve commanders and staff of daily administrative burdens and automate less critical processes, such as drafting routine reports, summarizing long documents, and translating technical information.[14: US Department of Defense, 2024, "Task Force Lima," Chief Digital and Artificial Intelligence Officer, https://www.ai.mil/Portals/137/Documents/Resources%20Page/2024-12-TF%20Lima-ExecSum-TAB-A.pdf.] In training and simulation, generative AI models can serve as simulation tools in war games and exercises. They populate synthetic environments with plausible adversarial actors and behaviors. This role improves scenario realism and generates alternative courses of action.

At the same time, these features can also be weaponized for offensive information operations. Adversaries can use generative AI to run large-scale, low-cost disinformation campaigns. This may involve producing tailored propaganda or impersonating Alliance leaders, journalists, and civil society voices. Generative AI will therefore be a powerful tool in the hands of adversaries seeking to manipulate perceptions and erode NATO's cohesion.[15: Claudia Wallner with Simon Copeland and Antonio Giustozzi, 2025, "Russia, AI and the Future of Disinformation Warfare," Royal United Services Institute (RUSI), June, https://static.rusi.org/russia-ai-and-the-future-of-disinformation-warfare.pdf.]

Classification: Noise and signal in a sensor-saturated battlespace

Classification models excel at recognizing patterns in labeled data and assigning new inputs to categories they have learned. Militaries already use such models for computer vision, facial and object recognition, and behavior detection. Computer vision models can identify vehicles, aircraft, ships, and infrastructure in imagery from satellites, aircraft, and UASs against their regularly updated data libraries. Classification tools can become crucial for early warning systems, from detecting stealthy cyber intrusions to flagging irregular troop movements. In sum, over the next decade, these systems are well-suited to sit at the core of intelligence, surveillance, reconnaissance, and targeting architectures.

Much is also expected from AI-enabled electronic warfare. In a battlespace saturated with sensors, classification tools can automate filtering of the electromagnetic spectrum, such as distinguishing signal from noise and highlighting anomalous signals that warrant human attention. Furthermore, signal processing algorithms can suggest waveforms to counter hostile signals and thus help overcome adversarial jamming in real time.[16: John Keller, 2024, "Navy Approaches Industry for Electronic Warfare (EW), RF Surveillance, and Artificial intelligence (AI)," Military and Aerospace Electronics, August 21, https://www.militaryaerospace.com/sensors/article/55134498/electronic-warfare-ew-rf-surveillance-artificial-intelligence-ai.] As the electromagnetic spectrum becomes more contested, the ability to recognize and respond to subtle patterns faster than an adversary will be a critical advantage.
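To make the idea of automated spectrum filtering more concrete, the following Python sketch flags frequency bins whose received power stands well above an estimated noise floor, so that only those bins are passed to a human operator. It is a deliberately simple statistical threshold rather than a trained classifier, and it does not describe any fielded electronic warfare system; the function name, the threshold parameter `k`, and the dB readings are illustrative assumptions.

```python
import statistics


def flag_anomalous_bins(power_db, k=5.0):
    """Return indices of frequency bins that stand out from the noise floor.

    Hypothetical sketch: power_db holds one received-power reading (in dB) per
    frequency bin; k is how many robust standard deviations above the floor a
    bin must sit before it is flagged for human attention.
    """
    # The median estimates the noise floor; a few strong emitters cannot drag it upward.
    floor = statistics.median(power_db)
    # Median absolute deviation (MAD), scaled to approximate a standard deviation
    # under Gaussian noise.
    mad = statistics.median(abs(p - floor) for p in power_db)
    sigma = 1.4826 * mad if mad > 0 else 1e-9
    return [i for i, p in enumerate(power_db) if (p - floor) / sigma > k]


readings = [-100, -101, -99, -100, -70, -100, -102, -98, -100, -60]
print(flag_anomalous_bins(readings))  # [4, 9]
```

A median-based threshold is used here because, unlike a mean, it is not pulled upward by the very signals the filter is meant to find. Operational systems would layer far more sophisticated classification on top of such a first-pass filter.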

Prediction and data fusion: Scaling decision support

Prediction models analyze historical and real-time data to identify trends and forecast likely future events. In military settings, they underpin decision-support systems (DSS) designed to help commanders cope with complexity and information overload. The transition to multidomain operations underscores the importance of such multimodal data fusion and analytics.[17: Felix Govaers, 2023, "Novel Concepts for Sensor Data Fusion in Multi Domain Operations," Sensing Technology Panel, NATO Science and Technology Organization, July 27, https://www.sto.nato.int/document/novel-concepts-for-sensor-data-fusion-in-multi-domain-operations/.]

Such models are therefore well suited to battle management, as they fuse information and data streams from land, air, maritime, cyber, and space assets into a single operating picture that can be updated in real time.[18: NATO Communications and Information Agency, 2024, "Ukraine Showcases Battlefield Technology at NATO Edge 24," News, December 10, https://www.ncia.nato.int/newsroom/news/ukraine-showcases-battlefield-technology-at-nato-edge-24.] However, this also means that such data-centric decision-making processes can narrow commanders' perceptions and constrain their choices.[19: Emelia Probasco et al., 2025, "AI for Military Decision-Making: Harnessing the Advantages and Avoiding the Risks," Center for Security and Emerging Technology, April, https://doi.org/10.51593/20240028.]
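One elementary way to fuse reports of the same object from several sensors into a single estimate is inverse-variance weighting, in which more precise sensors count for more. The Python sketch below illustrates that textbook technique under the assumption that each sensor reports an independent position estimate with a known error variance; the function name and sample values are hypothetical, and operational fusion engines (for example, Kalman-filter-based trackers) are considerably more elaborate.

```python
def fuse_position_reports(reports):
    """Fuse independent (x, y, variance) reports on one track into a single estimate.

    Each report is weighted by the inverse of its error variance, so precise
    sensors dominate noisy ones. Returns (x, y, fused_variance).
    """
    if not reports:
        raise ValueError("at least one report is required")
    weights = [1.0 / var for _, _, var in reports]
    total = sum(weights)
    x = sum(w * rx for w, (rx, _, _) in zip(weights, reports)) / total
    y = sum(w * ry for w, (_, ry, _) in zip(weights, reports)) / total
    # The fused variance is never larger than the smallest input variance.
    return x, y, 1.0 / total


# Hypothetical reports: a precise radar track and a noisier drone camera estimate.
print(fuse_position_reports([(10.0, 20.0, 1.0), (13.0, 23.0, 2.0)]))
# -> x = 11.0, y = 21.0, fused variance of about 0.67
```

The fused estimate sits closer to the more precise radar report, and its variance is lower than either input's, which is the basic reason fusing many sensors can sharpen a common operating picture.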

They can also highlight early warning indicators, propose likely adversary courses of action, and flag emerging risks in logistics and supply chains. In logistics in particular, AI can support predictive maintenance of critical stockpiles; forecast demand for ammunition, fuel, and spare parts; and anticipate bottlenecks in transportation networks.[20: Beth Reece, 2025, "AI to Boost Efficiency, Optimize Logistics Support as DLA Standardizes Use of New Tech," Defense Logistics Agency, May 17, https://www.dla.mil/About-DLA/News/News-Article-View/Article/4122004/ai-to-boost-efficiency-optimize-logistics-support-as-dla-standardizes-use-of-ne/.] Predictive systems can also assist with medical support by estimating casualties and optimizing the positioning of medical resources.[21: Ryan M. Leone et al., 2024, "Artificial Intelligence in Military Medicine," Military Medicine 189, no. 9-10: 244–248, https://doi.org/10.1093/milmed/usae359.]
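Demand forecasting of the kind described above can be illustrated with simple exponential smoothing, one of the most basic forecasting methods. The following Python sketch is a hypothetical example, not a description of any Defense Logistics Agency or NATO tool; the consumption figures and the smoothing factor `alpha` are assumptions chosen for illustration.

```python
def forecast_next_period(history, alpha=0.5):
    """Forecast next-period demand with simple exponential smoothing.

    history: past consumption figures, oldest first (e.g., artillery rounds per day).
    alpha: weight in (0, 1] given to the newest observation; higher values react
    faster to surges but also to noise.
    """
    if not history:
        raise ValueError("history must contain at least one observation")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = history[0]
    for observed in history[1:]:
        # Blend the newest observation with the running estimate.
        level = alpha * observed + (1 - alpha) * level
    return level


daily_rounds = [100, 120, 140]  # hypothetical consumption figures
print(forecast_next_period(daily_rounds))  # 125.0
```

The choice of `alpha` captures a real planning trade-off: a high value tracks a sudden surge in consumption quickly, while a low value smooths out day-to-day noise at the cost of reacting late.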

Autonomy: From perception to action

Autonomy involves AI systems that perceive their environment, process real-time data from sensors, and make decisions in pursuit of a mission objective without constant human intervention. In this case, AI models can cause kinetic effects, as they can direct hardware and/or software to react within the physical realm based on the input from the immediate environment.

Onboard AI enables uncrewed aircraft, ground vehicles, and maritime platforms to filter and fuse sensor inputs, navigate in contested environments, and pass the most relevant information back to human controllers. Advances in machine vision, for example, allow drones to compare real-time imagery from downward-facing cameras with stored satellite images and inertial data to determine their position without reliance on global navigation satellite systems. This is particularly important in GPS-denied or heavily jammed environments.[22: Dominika Kunertova, 2025, "Embracing Drone Diversity: Five Challenges to Western Military Adaptation in Drone Warfare," Freeman Air & Space Institute Paper 29, King's College London.]
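The image-comparison idea behind vision-based navigation can be sketched as template matching: the drone slides its camera view across a stored reference image and picks the offset where the two agree best. The Python sketch below assumes small grayscale grids that already share the same scale and orientation and uses a sum-of-squared-differences score; it is a simplified, hypothetical illustration, whereas real systems must also handle rotation, scale, lighting, and seasonal change, and would combine the result with inertial data.

```python
def locate_patch(stored_map, camera_patch):
    """Return the (row, col) offset in stored_map where camera_patch matches best.

    Both inputs are 2-D lists of grayscale intensities with the same scale and
    orientation. The score is the sum of squared differences (SSD): the lower
    the score, the closer the match.
    """
    map_h, map_w = len(stored_map), len(stored_map[0])
    patch_h, patch_w = len(camera_patch), len(camera_patch[0])
    best_score, best_pos = float("inf"), None
    # Slide the patch over every position where it fits entirely inside the map.
    for r in range(map_h - patch_h + 1):
        for c in range(map_w - patch_w + 1):
            score = sum(
                (stored_map[r + i][c + j] - camera_patch[i][j]) ** 2
                for i in range(patch_h)
                for j in range(patch_w)
            )
            if score < best_score:
                best_score, best_pos = score, (r, c)
    return best_pos


# Hypothetical 5x5 reference image and a 2x2 camera view cut from row 2, column 1.
reference = [[10 * r + c for c in range(5)] for r in range(5)]
view = [row[1:3] for row in reference[2:4]]
print(locate_patch(reference, view))  # (2, 1)
```

Once the best-matching offset is known, the drone can convert it into a map position, which is why such matching can substitute for satellite navigation when GPS is jammed.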

Autonomy is also extending to terminal guidance and target recognition. Today, many drones operate on autopilot for parts of their mission, with humans in- or on-the-loop for final engagement decisions. Over time, fully autonomous solutions that combine visual navigation, target recognition, and terminal guidance are likely to proliferate. Seamless data flows, however, are crucial. Ukrainian forces use a practice that resembles "Uber targeting," where one unit identifies a target, shares the observation on an encrypted network, and the targeting assignment goes to whichever unit is available, even facilitating joint-strike capability from multiple vectors.[23: Mark Bruno, 2022, "'Uber for Artillery'–What is Ukraine's GIS Arta System?," Molochproject, August 24, https://themoloch.com/conflict/uber-for-artillery-what-is-ukraines-gis-arta-system/.] AI-enabled systems that can collect, process, and act on information in real time will make such dynamic targeting more common, especially when communications with higher headquarters are degraded.
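The dispatching logic behind such "Uber targeting" can be reduced, for illustration, to a simple rule: give each reported target to the nearest unit that is currently free. The Python sketch below shows only that rule; the `Unit` structure, the flat x/y coordinates, and the nearest-available policy are hypothetical assumptions and do not describe how GIS Arta or any other fielded system actually works.

```python
import math
from dataclasses import dataclass


@dataclass
class Unit:
    """Hypothetical strike unit with a position on a flat local grid (km)."""
    name: str
    x: float
    y: float
    available: bool = True


def assign_target(target_xy, units):
    """Assign a reported target to the closest available unit and mark it as tasked.

    Returns the chosen Unit, or None if every unit is already tasked.
    """
    free = [u for u in units if u.available]
    if not free:
        return None
    chosen = min(free, key=lambda u: math.dist((u.x, u.y), target_xy))
    chosen.available = False  # mark as tasked so the unit is not assigned twice
    return chosen


units = [Unit("mortar-1", 0, 0), Unit("drone-2", 4, 3), Unit("artillery-3", 10, 10)]
print(assign_target((5, 4), units).name)  # drone-2
print(assign_target((5, 4), units).name)  # mortar-1
```

Even this toy version shows why seamless data flows matter: the rule only works if target reports and unit availability are shared in near real time across the network.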

From incremental adoption to algorithmic warfare

Together, developments in these functional areas point toward the algorithmic future of warfare. Broadly speaking, algorithmic warfare refers to integrating automated, autonomous, and AI technologies into the conduct of war while decreasing the role of human elements.[24: Ingvild Bode et al., 2023, "Algorithmic Warfare: Taking Stock of a Research Programme," Global Society 38, no. 1: 1–23, doi:10.1080/13600826.2023.2263473.] In algorithmic warfare, the military conducts operations through AI-enabled capabilities that collect, analyze, and act on data at speeds and scales beyond human capacity. Artificially intelligent means can operate where human warfighters cannot, reducing their exposure to danger. Such AI-enabled autonomous capabilities will especially be assigned tasks at the edge of the battlespace, handling time-critical sensing and response functions without human supervision and with minimal guidance.[25: Courtney Crosby, 2020, "Operationalizing Artificial Intelligence for Algorithmic Warfare," Military Review, July–August: 43–51, https://www.armyupress.army.mil/Portals/7/military-review/Archives/English/JA-20/Crosby-Operationalizing-AI-1.pdf.]

Yet the most transformative effects of military AI are likely to appear in two use cases. First, AI in DSS will expand the scale and speed of information processing, giving commanders a richer but more mediated view of the operating environment. Decision-support tools will not only help humans make better-informed choices but also shape the decision space by highlighting some options and obscuring others. Second, AI embedded in weapons platforms will use speed and autonomy to compress the kill chain, shrinking the time between detection, identification, decision, and engagement.[26: Kenneth Payne, 2018, "Artificial Intelligence: A Revolution in Strategic Affairs?," Survival 60, no. 5: 7–32, doi:10.1080/00396338.2018.1518374.] This has implications for escalation control, the rules of engagement (ROE), and the role of commanders in supervising rapid, machine-driven engagements.

Drivers of change

Several structural drivers are signaling greater reliance on AI and algorithmic approaches to warfare. These drivers are particularly important for NATO as it implements its Digital Transformation Vision and prepares for multidomain operations.

Digital modernization of defense

First, the broader digital modernization of defense is creating the conditions in which AI can thrive. Modern militaries are upgrading their IT infrastructure and moving to software-defined capabilities that deliver new functionality to existing platforms.27Simona R. Soare, Pavneet Singh, and Meia Nouwens, 2023, “Software-defined Defence: Algorithms at War,” International Institute for Strategic Studies, February, https://www.iiss.org/research-paper/2023/02/software-defined-defence. This also means adopting data-centric approaches to capability development through collaborative digital spaces.

As militaries continue to digitalize their forces, dominance in the electromagnetic (EM) spectrum becomes crucial, given their new dependencies on sensors, satellites, and networked systems. Russia’s war in Ukraine has underscored the importance of EM warfare, including GPS jamming and communications blackouts.28Kateryna Stepanenko, 2025, “The Battlefield AI Revolution Is Not Here Yet: The Status of Russian and Ukrainian AI Drone Efforts,” Institute for the Study of War, Special Report, June 2, https://understandingwar.org/wp-content/uploads/2025/06/The20Battlefield20AI20Revolution20Is20Not20Here20Yet20The20Status20of20Current20Russian20and20Ukrainian20AI20Drone20Efforts20PDF.pdf. These developments push militaries to design more resilient, autonomous, and decentralized command-and-control structures with stronger cybersecurity measures. At the same time, electromagnetic warfare in the West has not received the attention it needs and is still seen as largely subservient to, or stovepiped from, cyber.29Clara Le Gargasson and James Black, 2025, “Electromagnetic Warfare: NATO’s Blind Spot Could Decide the Next Conflict,” RAND Commentary, November 24, https://www.rand.org/pubs/commentary/2025/11/electromagnetic-warfare-natos-blind-spot-could-decide.html; and Justin Bronk, 2025, “Airborne Electromagnetic Warfare in NATO: A Critical European Capability Gap,” RUSI Occasional Paper, https://static.rusi.org/airborne-electronic-warfare-in-nato_0.pdf.

Interconnected domains

Second, the move toward multidomain operations (MDO) requires integrating effects across land, air, maritime, space, and cyberspace, as well as in the virtual and cognitive dimensions. MDO aims to “[orchestrate] military activities, synchronize non-military instruments of power, and deliver converging effects at the speed of relevance.”30NATO Allied Command Transformation, 2022, “Multi-Domain Operations: Enabling NATO to Out-Pace and Out-Think its Adversaries,” July 29, https://www.act.nato.int/article/multi-domain-operations-enabling-nato-to-out-pace-and-out-think-its-adversaries/. To make this possible, allies are building a digital backbone that can enable command and control across all domains. However, the effectiveness of this backbone depends on interoperable data sharing, secure and reliable communications, and advanced analytics capable of fusing data into a real-time consolidated multidomain picture. Embedding well-integrated AI models in C4ISR systems, so that they enhance situational awareness and support decision-making, therefore becomes one of the key conditions for conducting multidomain operations.

Autonomy pursuits

Third, recent and ongoing conflicts are accelerating experimentation with AI-enabled autonomous and decision-support systems. In Ukraine, AI-driven platforms already analyze extensive sensor and signal data to generate real-time targeting suggestions and logistical predictions.31Haley Britzky and Isabelle Khurshudyan, 2025, “US Drone Dilemma: Why the Most Advanced Military in the World Is Playing Catchup on the Modern Battlefield,” CNN, September 15, https://www.cnn.com/2025/09/15/politics/drone-us-military-russia-ukraine. In Gaza, reports indicate that machine-learning systems such as “Gospel” and “Lavender” have been used to support dynamic targeting and terminal navigation by combining multi-source imagery with other intelligence inputs.32Anthony Downey, 2025, “The Alibi of AI: Algorithmic Models of Automated Killing,” Digital Wars 6, no. 9, https://doi.org/10.1057/s42984-025-00105-7. These cases illustrate a shift from isolated, weapon-centric AI applications toward more comprehensive systems that inform planning, targeting, and force deployment at all command levels.

Drones are no longer only agents of remote warfare; they are fast becoming agents of algorithmic warfare as well. Demand for battlefield drone footage has surged: thousands of drone-camera videos depicting successful strikes are used to train computer-vision models, while engineers race to design uncrewed systems that can navigate and coordinate in GPS- and communications-denied environments using onboard processing and limited power.

Two motivations stand out: building “mass for precision” and supplementing shrinking human force structures. Swarm tactics and swarm command seek to saturate defenses and compress reaction times through the coordinated use of large numbers of low-cost platforms. At the same time, demographic trends and recruitment challenges will incentivize greater robotic integration and human-machine teaming. Forward-deployed, uninhabited platforms on standby will increasingly redefine how militaries think about force projection and readiness.33NATO Science and Technology Organization, 2025, Science and Technology Trends 2025-2045, vol. 1, https://sto-trends.com/. For instance, large drone formations could give an aggressor an edge in invading foreign territory by straining the capacity of air defenses.34Hong-Lun Tiunn et al., 2025, “Drones for Democracy: U.S.-Taiwan Cooperation in Building a Resilient and China-Free UAV Supply Chain,” Research Institute for Democracy, Society and Emerging Technology, June 16, https://dset.tw/wp-content/uploads/2025/06/Drones-for-Democracy-U.S.-Taiwan-Cooperation-in-Building-a-Resilient-and-China-Free-UAV-Supply-Chain-1.pdf. Across these trends, AI is fast becoming more than just a technological tool; it is a vital strategic competency,35Lena Trabucco and Esben Salling Larsen, 2025, “Artificial Intelligence in Command and Control,” Center for Military Studies, October 10, https://cms.polsci.ku.dk/english/publications/artificial-intelligence-in-command-and-control/. and it will likely determine which militaries can exploit AI at scale and under stress. For NATO, understanding where AI is most likely to transform operations, and how adversaries might target the vulnerabilities of AI-enabled systems, is a prerequisite for credible deterrence and effective defense in the emerging era of algorithmic warfare.

Part two: The specter of algorithmic warfare

Militaries have not yet realized the full potential of AI technologies, but it is not difficult to see how AI will shape the strategic environment and wartime paradigms. As the AI race intensifies, potent AI-enabled capabilities will be deployed as part of NATO’s digital transformation and decision-support ambitions.36NATO Allied Command Transformation, 2023, “Joint Force Development Experimentation & Wargaming Branch Fact Sheet – Human Considerations in Artificial Intelligence for Command and Control: Augmented Near Real-Time Instrument for Critical Information Processing and Evaluation (ANTICIPE),” ACT Fact Sheet; and SHAPE Public Affairs Office, 2025, “NATO Acquires AI-enabled Warfighting System,” News Release, April 14, https://shape.nato.int/news-releases/nato-acquires-aienabled-warfighting-system-. This section translates interview insights and workshop discussions into a structured analysis of AI’s core components, their vulnerabilities, and the likely vectors of adversarial attack. Two case studies used in the workshop, AI applications in autonomous weapons platforms and in a decision-support system, further informed the analysis of the limits of the main AI countermeasures and of the conditions under which escalation in algorithmic warfare may occur. The workshop focused on these two applications because the likelihood of an adversary attacking NATO for using AI models in areas such as predictive maintenance is comparatively low.

AI triad

Military AI rests on three interlocking components often described as the AI triad: data, algorithms, and computing power.37Ben Buchanan, 2020, “The AI Triad and What It Means for National Security Strategy,” Center for Security and Emerging Technology, https://cset.georgetown.edu/wp-content/uploads/CSET-AI-Triad-Report.pdf. Each component has specific implications for offense-defense parameters. For instance, attacks on algorithms target model architecture, attacks on computing power disrupt semiconductors and supply chains, and attacks on data use cyber operations to poison datasets.

Data refers to information about the focus area of the machine-learning system, collected from sensors and other sources, organized, stored, and made accessible. Algorithms are the series of instructions used to process information; machine-learning algorithms derive insights from datasets and encode them as learnable parameters, the model weights, which embody the core capabilities of an AI model. Computing power provides the speed and capacity to execute algorithms at scale, train models to determine weights, and run inference offline on deployed systems.38Ben Buchanan, 2020, “The U.S. Has AI Competition All Wrong,” Foreign Affairs, August 7, https://www.foreignaffairs.com/articles/united-states/2020-08-07/us-has-ai-competition-all-wrong. In practice, computing power includes processors and graphics cards, advanced semiconductors, content delivery networks, power supplies, and cooling. Defense applications often need to run offline on edge devices under strict size, weight, and power constraints, or on government cloud resources with limited GPU availability. Data, sometimes dubbed the new “munition” because of their importance for modern warfare, raise issues of volume, quality, salience, and labeling. The amount of training data strongly influences effectiveness, though collecting the right operational data and labeling them correctly are just as important for accuracy and alignment. Training algorithms distill data into model weights, and the resulting model architecture determines how incoming data are analyzed in real-time operations.

AI vulnerabilities and vectors of attack

Integrating AI introduces several challenges along the entire triad. Core datasets are massive, models can be opaque, and natural-language prompting expands the input surface. These characteristics create multiple entry points for adversaries and raise the importance of disciplined processes and safeguards. Adversaries will attempt to degrade NATO’s AI-enabled capabilities by targeting the triad across cyber, electromagnetic, and conventional kinetic dimensions. This section outlines how such attacks could deny the Alliance the advantages of AI.

Computing power

Vulnerabilities associated with computing power reflect the physicality of AI infrastructure: advanced semiconductors and specialized chips must be sourced, supplied, and integrated into systems that also require stable energy and cooling. The performance of inference-heavy applications may depend on AI-optimized hardware. These dependencies create risks during material shortages, expose weak points in data centers, and constrain performance at the tactical edge.

Adversaries can exploit these material dependencies. They can disrupt the supply of specialized AI chips, seed vendor-supplied Trojan backdoors, or manipulate cloud architectures built with commercial technology. They can target the electricity supply of data centers and sabotage their water-cooling systems to cause outages, or damage undersea cables and content-delivery networks to disrupt data flows.

Data

Data is vulnerable across the lifecycle of AI models. Adversaries can poison training datasets through cyber operations that mislabel data or introduce hidden triggers that cause the model to misbehave. Poorly labeled or biased datasets degrade performance, making certain classes of objects invisible to the system or causing it to misclassify them at critical ranges. If the wrong data is collected, or if the right data is corrupted, the model can malfunction, degrading the entire decision-support chain and reducing the system’s reliability in the long term.
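To make the mislabeling pathway concrete, here is a minimal Python sketch. It is not drawn from the workshop or from any fielded system: it assumes a toy nearest-centroid classifier over hypothetical two-dimensional sensor features with invented labels ("decoy" and "vehicle"). Injecting a handful of vehicle-like samples labeled as decoys drags the decoy centroid toward the vehicle cluster, so a genuine vehicle observation is then misclassified.

```python
from statistics import mean

# Hypothetical toy data: 2-D feature vectors paired with invented class labels.
Sample = tuple[tuple[float, float], str]


def centroids(samples: list[Sample]) -> dict[str, tuple[float, float]]:
    """Return the mean feature vector (centroid) for each class label."""
    by_label: dict[str, list[tuple[float, float]]] = {}
    for point, label in samples:
        by_label.setdefault(label, []).append(point)
    return {
        label: (mean(p[0] for p in pts), mean(p[1] for p in pts))
        for label, pts in by_label.items()
    }


def classify(point: tuple[float, float], cents: dict[str, tuple[float, float]]) -> str:
    """Assign the label whose centroid is nearest (squared Euclidean distance)."""
    return min(
        cents,
        key=lambda lbl: (point[0] - cents[lbl][0]) ** 2 + (point[1] - cents[lbl][1]) ** 2,
    )


clean: list[Sample] = [
    ((0.0, 0.0), "decoy"), ((1.0, 0.0), "decoy"), ((0.0, 1.0), "decoy"),
    ((5.0, 5.0), "vehicle"), ((6.0, 5.0), "vehicle"), ((5.0, 6.0), "vehicle"),
]

# Poisoning by mislabeling: vehicle-like samples injected with the "decoy" label.
poison: list[Sample] = [((6.0, 6.0), "decoy")] * 6

target = (4.5, 4.5)  # a genuine vehicle observation
print(classify(target, centroids(clean)))           # clean training set
print(classify(target, centroids(clean + poison)))  # poisoned training set
```

Trained on clean data, the sketch labels the target a vehicle; after poisoning, the shifted decoy centroid sits closer to the target and it is labeled a decoy. Real models and attacks are far more complex, but the mechanism of corrupted labels silently reshaping what the model learns is the same in kind.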

Adversaries can also interfere with real-world data collection. Because drones and other autonomous systems rely on environmental input, adversaries can tamper with the surroundings to distort sensory input and cause abnormal behavior. For instance, blinding ISR sensors or deceiving them with optical illusions, tampering with the sensors themselves, or generating spoofing signals can mislead the model into inappropriate responses.39Takami Sato et al., 2025, “On the Realism of LiDAR Spoofing Attacks against Autonomous Driving Vehicle at High Speed and Long Distance,” Network and Distributed System Security (NDSS) Symposium 2025, February 24–28, San Diego, California, https://dx.doi.org/10.14722/ndss.2025.230628. In addition to onboard perception and planning modules, adversaries can target the control interfaces, power management, data relays, and user interfaces used to coordinate connected autonomous systems. Disabling low-Earth-orbit satellites can also cut off real-time input and data sharing.

Algorithms

Incorporating AI into the digital architecture makes existing systems susceptible to attacks that target the AI model itself. Because a model’s parameters encode the internal configuration crucial to its operation, compromising its weights and biases gives an attacker significant leverage. Adversaries can also try to steal model weights through espionage or proxy hackers, gaining access to the model’s core capabilities for manipulation.40Sella Nevo et al., 2024, Securing AI Model Weights: Preventing Theft and Misuse of Frontier Models, RAND, May 30, https://www.rand.org/pubs/research_reports/RRA2849-1.html.

Adversaries can thicken the fog of war for algorithms by flooding AI-enabled DSS with inputs that are inaccurate, uncategorizable, or nonpatternable. They can exploit the rare and unpredictable features of the battlefield, since AI models are mostly trained on either synthetic data or on datasets from previous conflicts that may not quite fit the type and circumstances of the current war zone.

Interviewed experts and workshop participants indicated that the most likely adversarial action against military AI architecture would include:

- Blinding sensors on ISR platforms to stop the real-time input of new data.

- Spreading misinformation to confuse the algorithms with nonpatternable data.

- Physically damaging undersea cables to disrupt data sharing.

- Conducting espionage in the suppliers’ private lab facilities.

Surprisingly, however, the human appears to be the most vulnerable component of AI, followed by data and algorithms, with computing power the least vulnerable. Such human-related vulnerabilities include personalized phishing, social engineering, cognitive bias, and deskilling.

Countering military AI

Having discussed the vectors of adversarial attacks on AI-enabled military systems and capabilities, this section now briefly comments on the means of such attacks. These AI countermeasures include cyber operations, conventional kinetic attack, electronic warfare, directed energy weapons (DEW), and tailored nuclear weapons with enhanced electromagnetic pulse (EMP). Each has distinct advantages and limitations.

Cyber operations

Cyber operations can interfere with how AI models learn and operate by manipulating ones and zeros. Cyberattacks can degrade a model’s performance or integrity, or limit its availability by delaying responses or rendering command-and-control systems inoperative at crucial moments.41Elena Sokova, 2020, “Disruptive Technologies and Nuclear Weapons,” New Perspectives 28, no. 3, 292–297, https://doi.org/10.1177/2336825X20934975. Integrating AI into military systems increases their vulnerability simply by creating more targets for computer hacking.42Pavel Sharikov, 2018, “Artificial Intelligence, Cyberattack, and Nuclear Weapons—A Dangerous Combination,” Bulletin of the Atomic Scientists 74, no. 6: 368–73, doi:10.1080/00963402.2018.1533185. Such attacks include compromising software libraries, poisoning training data, hijacking AI infrastructure, and stealing sensitive AI assets. These cyberattacks, however, require prior intelligence to target the right datasets and processing centers. Their effects can be difficult to assess and attribute in real time, which increases the potential for miscalculation.

Conventional kinetic action

Conventional kinetic attacks can target ISR assets, including space-based systems, airborne warning and control system aircraft, and other hardware components integral to critical AI infrastructure. Traditional air defenses can engage small autonomous vehicles carrying offensive AI with low-cost interceptors, nets, and guns. Kinetic action produces tangible effects but can be escalatory depending on target and context, and it may be costly and resource-intensive when used at scale against saturation attacks.

Electronic warfare

Electronic warfare uses electromagnetic energy to degrade hostile systems by jamming or spoofing. EW can produce reversible, nonlethal effects, but it is constrained by range, power, and antennas, and by the need for detailed knowledge of enemy waveforms and code. Focused jamming and signal spoofing against multisensor platforms can push AI into analytical errors and lead to wrong reactions. Jamming communications, however, works only against collaborative autonomous platforms that communicate among themselves to adapt their course of action.

Directed-energy weapons

High-power microwaves and high-energy lasers widen the range of electromagnetic spectrum operations (EMSO). They can disable or destroy electronics on autonomous platforms using concentrated electromagnetic energy.43Kelley M. Sayler et al., 2024, “Department of Defense Directed Energy Weapons: Background and Issues for Congress,” Congressional Research Service, July 11, https://crsreports.congress.gov, R46925. While microwaves suit area defense and perimeter denial against drone swarms, lasers perform point defense, similar to short-range air defense and counter-rocket, artillery, and mortar missions. Directed-energy weapons have short logistics tails and a low cost per shot, but they are power hungry and range-limited. Atmospheric conditions such as rain and fog can reduce beam quality and effectiveness and can also increase fratricide risks. Their applications for space missions look promising given their reusability and their potential to degrade or destroy a satellite.44Zhanna L. Malekos Smith, 2024, “The Specter of EMP Weapons in Space,” Carnegie Council for Ethics in International Affairs, March 27, https://www.carnegiecouncil.org/media/article/the-specter-of-emp-weapons-in-space.

Tailored nuclear weapons with enhanced electromagnetic pulse

Nuclear explosions of all types, from underground to high altitude, are accompanied by an electromagnetic pulse. The strength and area coverage of this intense, time-varying electromagnetic radiation depend on the warhead type and yield and on the altitude of the detonation.45US Department of Energy, 2017, US Department of Energy Electromagnetic Pulse Resilience Action Plan, https://www.energy.gov/sites/prod/files/2017/01/f34/DOE%20EMP%20Resilience%20Action%20Plan%20January%202017.pdf#:~:text=Although%20an%20EMP%20is%20also%20generated%20by,EMP%20events%20produced%20by%20high%20altitude%20detonations. As a result, high-altitude airbursts can have a continent-wide deposition region, whereas for explosions in the atmosphere at altitudes below 30 kilometers, the radius of the deposition region ranges from about 5 to 16 kilometers.46Samuel Glasstone and Philip J. Dolan, 1977, “The Electromagnetic Pulse and Its Effects,” in The Effects of Nuclear Weapons, Glasstone and Dolan, eds., US Department of Defense and the Energy Research and Development Administration, 514–531, Digitized and published by Chris Griffith and Eric A. Meyer, 2022, https://atomicarchive.com/resources/documents/effects/glasstone-dolan/chapter11.html.
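Why high-altitude bursts reach continental scales follows, to first order, from geometry: a burst above the dense atmosphere can affect the ground within its line of sight out to the horizon. The Python sketch below computes that line-of-sight ground radius on a spherical Earth, assuming a mean Earth radius of 6,371 kilometers and illustrative burst altitudes chosen for this example. It only bounds the area in direct view of the burst; it is not a model of EMP field strength, which also depends on yield and weapon design, and it does not apply to the low-altitude case described above.

```python
import math

EARTH_RADIUS_KM = 6371.0  # assumed mean Earth radius (spherical-Earth approximation)


def line_of_sight_radius_km(burst_altitude_km: float) -> float:
    """Ground arc distance from the point beneath a burst to its horizon.

    On a sphere of radius R, the horizon seen from altitude h lies at central
    angle theta with cos(theta) = R / (R + h); the arc length is R * theta.
    This bounds the area in direct line of sight of the burst only.
    """
    if burst_altitude_km < 0:
        raise ValueError("altitude must be non-negative")
    r = EARTH_RADIUS_KM
    return r * math.acos(r / (r + burst_altitude_km))


for h in (100, 200, 400):  # illustrative high-altitude burst heights in kilometers
    print(f"{h:>4} km burst -> ~{line_of_sight_radius_km(h):,.0f} km ground radius")
```

For these altitudes the radius comes out at roughly 1,120, 1,580, and 2,200 kilometers, which is why a single high-altitude detonation can expose electronics across much of a continent.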

Since the 1960s, EMPs, whether man-made or natural, have been known to be capable of disrupting, damaging, or destroying a wide array of electrical and electronic systems.47Roger Allen Meade, 2022, “Operation Fishbowl,” Los Alamos National Laboratory, National Nuclear Security Administration, US Department of Energy, October 25, https://www.osti.gov/servlets/purl/1896391. Exposure to an EMP can degrade the performance of electrical and electronic systems, causing either permanent functional damage or a temporary operational impairment lasting from seconds to hours.48Glasstone and Dolan, “The Electromagnetic Pulse and Its Effects.” Computers used in data processing and communications systems, along with semiconductors, are among the devices most susceptible to failure.49Washington State Department of Health, 2003, “Electromagnetic Pulse (EMP),” Fact Sheet 320-090, Division of Environmental Health, Office of Radiation Protection, https://doh.wa.gov/sites/default/files/legacy/Documents/Pubs/320-090_elecpuls_fs.pdf.

While airbursts produce little or no fallout and no residual radiation, it is difficult to predict their effects on today’s sensitive electronics or to avoid collateral damage and civilian casualties. Combined with the difficulty of signaling limited nuclear use, since the adversary cannot distinguish low-yield from high-yield weapons, the employment of nuclear EMP weapons remains highly problematic and inherently escalatory.50Richard Wolfson and Ferenc Dalnoki-Veress, 2021, Nuclear Choices for the Twenty-First Century: A Citizen’s Guide, MIT Press. Experimental exercises over the past decades have offered no assurance that a nuclear strike would remain limited.51Lawrence Freedman and Jeffrey H. Michaels, 2019, The Evolution of Nuclear Strategy, 4th ed. (London: Palgrave Macmillan).

Escalation and algorithmic warfare

The workshop assessed the salience of AI-enabled lethal operations along an escalatory pathway from minor cyber operations to DEW and nuclear EMP. The following paragraphs summarize the expert participants’ discussion on the conditions under which the use of military AI could increase the risk of escalation.

Escalation is defined as “an increase in the intensity or scope of conflict that crosses threshold(s) considered significant by one or more of the participants.”52Forrest E. Morgan et al., 2008, Dangerous Thresholds: Managing Escalation in the 21st Century (Santa Monica, California: RAND Corporation), 8. Escalation thresholds, in turn, mark the points beyond which an attack is likely to provoke retaliation. The workshop discussion highlighted the distinction between effects-based and means-based escalation logics. Effects-based logic locates thresholds in the impact of an attack, irrespective of weapon type, whereas means-based logic emphasizes the qualitative difference between the nuclear, conventional, and cyber domains. Under means-based logic, some means are regarded as less escalatory than others. For instance, cyberattacks have proven capable of restraining the escalation dynamic and even de-escalating geopolitical crises.53Sarah Kreps and Jacquelyn Schneider, 2019, “Escalation Firebreaks in the Cyber, Conventional, and Nuclear Domains: Moving beyond Effects-based Logics,” Journal of Cybersecurity 5, no. 1: 1–11, tyz007, https://doi.org/10.1093/cybsec/tyz007. Similarly, attacks on large drones are less likely to lead to escalation than attacks on inhabited aircraft.54Erik Lin-Greenberg, 2022, “Wargame of Drones: Remotely Piloted Aircraft and Crisis Escalation,” Journal of Conflict Resolution 66, no. 10: 1737–1765, https://doi.org/10.1177/00220027221106960.

Most researchers studying the AI-nuclear intersection focus on AI amplifying existing risks in nuclear command, control, and communications that can spark accidental nuclear confrontation,55James Johnson, 2021, “‘Catalytic Nuclear War’ in the Age of Artificial Intelligence & Autonomy: Emerging Military Technology and Escalation Risk between Nuclear-Armed States,” Journal of Strategic Studies, 1–41, https://doi.org/10.1080/01402390.2020.1867541. undermining deterrence with AI-enabled conventional systems,56Steve Fetter and Jaganath Sankaran, 2024, “Emerging Technologies and Challenges to Nuclear Stability,” Journal of Strategic Studies 48, no. 2: 252–96, doi:10.1080/01402390.2024.2433766; Vladislav Chernavskikh and Jules Palayer, 2025, “Impact of Military Artificial Intelligence on Nuclear Escalation Risk,” Stockholm International Peace Research Institute (SIPRI), June, https://doi.org/10.55163/FZIW8544; and Jacob Stokes, 2025, Averting AI Armageddon: US–China–Russia Rivalry at the Nexus of Nuclear Weapons and Artificial Intelligence, Center for a New American Security, https://www.cnas.org/publications/reports/averting-ai-armageddon. incentivizing first strike,57Michael C. Horowitz, 2019, “When Speed Kills: Lethal Autonomous Weapon Systems, Deterrence and Stability,” Journal of Strategic Studies 42, no. 6, 764–788. https://doi.org/10.1080/01402390.2019.1621174. or exacerbating the proliferation/verification dilemma.58David M. Allison and Stephen Herzog, 2025, “Artificial Intelligence and Nuclear Weapons Proliferation: The Technological Arms Race for (In)visibility,” Risk Analysis 45, no. 11: 3839–3859, https://doi.org/10.1111/risa.70105. This workshop, by contrast, addressed the possible deliberate use of nuclear weapons as a warfighting tool designed to produce electromagnetic pulse effects against military AI.
Previous experimental war-gaming showed that although low-yield nuclear weapons do indeed destabilize international security since they are seen as a substitute for high-yield nuclear use, they do not seem to increase the likelihood of crossing the nuclear threshold.59Andrew W. Reddie and Bethany Goldblum, 2022, “Evidence of the Unthinkable: Experimental Wargaming at the Nuclear Threshold,” Journal of Peace Research 60, no. 5: 760–776, https://doi.org/10.1177/00223433221094734.

The workshop scenario described a fast, lethal, AI-enabled drone saturation attack on the Baltic region. The scenario listed a number of possible responses:

- Diplomatic action.

- Economic sanctions.

- Cyberattack.

- Conventional kinetic response.

- Electronic warfare measures.

- Directed energy weapons.

- Tailored nuclear weapons with enhanced electromagnetic pulse.

The workshop participants ranked the responses by their perceived escalatory potential. Diplomatic action and electronic warfare tended to come first, often in parallel. Kinetic action, cyber operations, and DEW followed as second-ring responses. Economic sanctions were seen as medium-term tools rather than immediate response levers. Tailored nuclear EMP was considered the least probable but most escalatory option, with a consensus that its use over NATO territory would be unacceptable. The participants’ most prevalent concerns about nuclear EMP use were lowering the threshold for strategic nuclear weapon use, observing the nuclear “taboo,” the proportionality of the response, the proliferation of nuclear weapons following nuclear use, and setting a negative precedent.

The follow-on discussion highlighted that adversaries may exploit the structural risks of AI. Complex AI systems can make attribution and intent assessment harder, as AI and autonomy create conditions for plausible deniability. In addition, increased speed and data volumes can work against the user: time pressure increases the risk that decision-makers will rely more heavily on potentially compromised AI outputs without understanding the source of unanticipated inputs or system failures.60Matthijs M. Maas, 2019, “How Viable Is International Arms Control for Military Artificial Intelligence? Three Lessons from Nuclear Weapons,” Contemporary Security Policy 40, no. 3: 285–311.

The workshop concluded that military AI is not inherently escalatory: offensive AI-enabled capabilities do not meaningfully change the nature or intensity of a conflict. What matters is the choice of target, the physical damage, and the presence of casualties. At the same time, the properties of AI (speed, autonomy, and opacity) can increase the risk of inadvertent escalation. Despite the fight for EM spectrum dominance, the AI status of an attack does not lower nuclear thresholds; effects on the ground determine the response. Ultimately, the proximity of adversary troops continues to be perceived as more escalatory than an AI-powered swarm attack.

Part three: Future scenarios

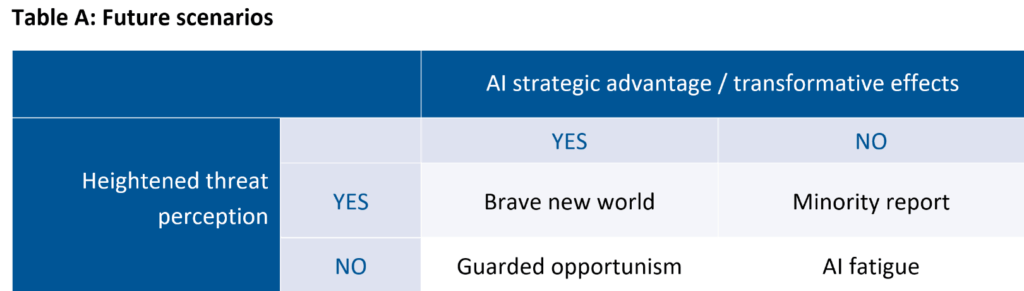

Juxtaposing the possible transformative effects of military AI against threat perception (table A), this foresight study outlines three military AI future scenarios: guarded opportunism, brave new world, and minority report. The goal is to anticipate long-haul innovation in countering adversarial attacks on NATO’s AI systems and to inform military research and development decisions.61Alexander Kott and Philip Perconti, 2025, “How Accurate Is Forecasting of Military Technologies?,” NATO Defense College, Hindsight Series Paper no. 6, https://www.ndc.nato.int/how-accurate-is-forecasting-of-military-technologies/.

The scenarios are built on two variables, each with graduated levels of likelihood. The first variable concerns the transformative impact of AI: whether countries gain any strategic advantage from integrating AI into their militaries. The second addresses adversaries’ threat perception: whether integrating AI provokes the development of new countermeasures and/or shifts on the escalation ladder.

The fourth quadrant, AI fatigue, represents the least likely scenario, with no decisive AI advantage and no heightened threat perception. It is less policy-salient but remains useful as a control case for future policy planning.

Scenario I. Guarded opportunism

This is the most plausible future scenario. AI meaningfully transforms military affairs and confers a comparative advantage on states that integrate it well, yet it does not worsen adversary threat perceptions. Business continues largely as usual. AI-enabled decision-support and autonomous systems transform the character of warfare through expanded scale and increased operational speed, without changing the nature of war.

NATO’s digital transformation and integrated AI-enabled military capabilities do not introduce qualitatively new risks or vulnerabilities. These remain familiar from cyberspace and can be managed with disciplined cyber hygiene and resilient power-supply architectures. However, AI may heighten some existing threat pathways and security risks. As AI becomes integral to the ability to operate and respond, degraded situational awareness and power outages, for instance, could become more consequential, and even a new center of gravity, in digitalized, software-defined defense. Decision-support systems help commanders filter the noise and frame choices faster, but they do not demand new categories of resilience beyond those Part Two already identified for the AI triad.

Hybrid pressure intensifies below the threshold of armed conflict. Cable cuts, data center intrusions, and information operations become routine. Russia continues to sabotage critical AI infrastructure to disrupt supply chains and to wage cyber and drone intimidation campaigns across Europe.62Charlie Edwards and Nate Seidenstein, 2025, “The Scale of Russian Sabotage Operations against Europe’s Critical Infrastructure,” International Institute for Strategic Studies, August 19, https://www.iiss.org/research-paper/2025/08/the-scale-of-russian–sabotage-operations–against-europes-critical–infrastructure/. Yet technical expertise and investment in resilient computer systems limit these escalation attempts. Better engineering and AI literacy shorten detection and attribution loops and speed up recovery.

Two challenges stand out. The first is the intergovernmental character of the Alliance. NATO relies on its member countries for certain types of cyber operations. This dependence on capitals to act creates latency in time‑sensitive crises and can produce inefficient responses that fail to prevent further escalation of hybrid warfare. The second is information warfare targeting the Alliance’s reputation. Publics in NATO countries with left‑leaning governments are targeted with disinformation campaigns that frame AI‑enabled capabilities as unethical “killer robots” and claim that NATO violates its own principles of responsible use of AI. Adversaries further fuel domestic opposition to reduce tech-sector cooperation.

Still, guarded opportunism is defined by low escalation risks. Algorithmic warfare remains bounded by existing ROE and proportional responses. The only point at which the AI and nuclear fields intersect with real-world consequences is the widespread military adoption of small nuclear reactors to power computationally demanding AI models.

Scenario II. Brave new world

In the second scenario, AI is transformative and threat perceptions worsen. The AI triad delivers a real strategic and operational edge. However, AI-related risks grow alongside it over time due to insufficient literacy, lack of regular training, lagging skill development, and sloppy implementation of zero‑trust policy across armed forces. Furthermore, rapid and widespread integration of AI models creates new vulnerabilities that stem from limited human agency and complicate the cognitive aspects of decision-making.63Avi Goldfarb and Jon R. Lindsay, 2022, “Prediction and Judgment: Why Artificial Intelligence Increases the Importance of Humans in War,” International Security 46, no. 3: 7–50, doi: https://doi.org/10.1162/isec_a_00425. The result is a higher probability of flash wars among autonomous robotic systems, in which algorithms interact too quickly for humans to be involved.64Paul Scharre, 2018, Army of None: Autonomous Weapons and the Future of War, 1st ed. (New York, NY: W. W. Norton & Company).

Such a degraded security environment sees multiple escalation spirals. Compressed decision-making times and fully autonomous systems contribute to perceptions of asymmetric disadvantage between Russia and NATO. Russia’s doctrine and force structure amplify the problem. Russia’s 2024 revision of its nuclear doctrine, with its greater emphasis on “aerospace attacks” (explicitly including drones) as one of the conditions under which nuclear weapons may be used, seems to lower the threshold for nuclear use.65Russian Federation Ministry of Foreign Affairs, 2024, “Fundamentals of State Policy of the Russian Federation on Nuclear Deterrence,” per Russian Presidential Order no. 991, https://www.mid.ru/en/foreign_policy/international_safety/1434131/. This demonstrates that Russia has become more reliant on its nonstrategic nuclear weapons since its conventional forces were degraded in the war on Ukraine.66Liviu Horovitz and Lydia Wachs, 2024, “Russian Nuclear Weapons in Belarus? Motivations and Consequences,” Washington Quarterly 47, no. 3, 103–29, doi:10.1080/0163660X.2024.2398952. This seems to strengthen the Russian leadership’s belief that nonstrategic nuclear weapons are Russia’s “competitive advantage” over NATO.67Jacek Durkalec, 2025, “Counterforce at the Regional Level of War: A European Perspective,” in Counterforce in Contemporary U.S. Nuclear Strategy, ed. Brad Roberts, Center for Global Security Research, Lawrence Livermore National Laboratory, 152–167. Furthermore, Russia’s vision of new-generation warfare builds upon weapons based on new physical principles, including radio-frequency, laser, infrasonic, and electromagnetic weapons. Russia has indeed been developing a precision-strike system built on the integration of EW, uncrewed strike and reconnaissance systems, hypersonic weapons, and low-yield nuclear warheads.

In contrast, because NATO’s deterrent power derives from advanced conventional capabilities, this scenario portrays a deeper blurring of the conventional and nuclear domains.68Wilfred Wan and Gitte du Plessis, 2025, “Blurring Conventional–Nuclear Boundaries: Nordic Developments, Global Implications,” SIPRI, https://www.sipri.org/commentary/essay/2025/blurring-conventional-nuclear-boundaries-nordic-developments-global-implications. Yet NATO struggles to attain superiority in strategic command and control without becoming dependent on commercial clouds and satellites. Large‑scale outages and cascading failures become more frequent. Allies hold regular war-gaming exercises to ensure that the Alliance’s responses remain proportionate even when attacks are AI‑generated. Meanwhile, Russia’s asymmetric countermeasures to the multidomain concept keep inflicting electronic damage on NATO command posts and communications centers.69Katarzyna Zysk, 2023, “Struggling, Not Crumbling: Russian Defence AI in a Time of War,” RUSI, Commentary, November 20, https://rusi.org/explore-our-research/publications/commentary/struggling-not-crumbling-russian-defence-ai-time-war.

Under high tension, states embrace capabilities that manipulate the spectrum, such as microwaves, lasers, and tailored EMP, seeking to blunt swarms and blind sensors. While EW once seemed unbeatable, jamming has lost its teeth against uncrewed vehicles that do not rely on communication and navigation links. And if autonomy was an antidote to EW, then degrading the electromagnetic environment has become the antidote to AI-enabled military capabilities.

Some governments resume nuclear explosive testing of airburst effects, which further entangles AI with the nuclear domain. The line between conventional and nuclear war grows more fragile with the proliferation of new classes of EMP weapons. Nuclear proliferation spirals out of control as more countries strive to develop their own low-yield nuclear EMP deterrents to counter AI-enabled adversaries. Worse, numerous experts inside and outside Russia believe that a nuclear EMP attack need not be governed by the same set of considerations as strategic nuclear weapons and nuclear doctrine.70Peter Vincent Pry, 2017, “Foreign Views of Electromagnetic Pulse Attack,” Report to the Commission to Assess the Threat to the United States from Electromagnetic Pulse (EMP) Attack, http://www.firstempcommission.org/uploads/1/1/9/5/119571849/foreign_views_of_emp_attack_by_peter_pry_july_2017.pdf. In this view, nuclear EMP weapons fall within the category of electronic warfare or information warfare, not nuclear warfare. In this increasingly popular interpretation, an EMP attack is regarded as a legitimate use of nuclear weapons within the specter of algorithmic warfare. Even if nuclear EMP is conceptualized as a form of information warfare in some circles, its use would be profoundly escalatory.

Scenario III. Minority report

In the third scenario, technology hype drives strategy. AI does not deliver decisive comparative advantage for the military, yet threat perceptions grow worse. Exaggerated expectations about the game-changing, transformative, and inevitable impact of AI fuel anxiety about falling behind. The fear of missing out, rather than tangible advantages from AI models, pushes countries deep into the AI race. Such alarmism about phantom AI advantages has a destabilizing effect on the strategic balance.

Information asymmetries deepen the problem. NATO militaries and Russian officials tout milestones and “breakthroughs,” while major AI firms speak of revolutionary models. The strategic conversation fixates on what might be developed tomorrow rather than what is fielded today. Decision‑makers overestimate near‑term effects and discount the risks and challenges of AI integration work highlighted in Parts One and Two. As a result, nuclear-armed great powers interpret routine military exercises as cover for preemptive strikes at machine speed and tend to see AI-enabled ISR improvements as a direct threat to their second-strike capabilities.

Escalation pathways in this scenario are cognitive. On the one hand, leaders race to push fully autonomous prototypes forward before safety case evaluations are completed. Miscalculation risk rises not because AI-enabled autonomous weapons systems are unstoppable, but because decision-makers believe they are. On the other hand, adversaries deploy cognitive warfare tactics of “algorithmic amplification” to influence how decision-makers reason, degrade critical decision-making processes, and undermine their sense of security.71Frank Hoffman, 2025, “Assessing ‘Cognitive Warfare,’ ” Small Wars Journal, November 14, https://smallwarsjournal.com/2025/11/14/assessing-cognitive-warfare/#_ednref3.

The Alliance faces the challenge of lowering expectations while preserving its technological edge. However, although allies have agreed to coordinate their political objective of developing AI-enabled armed forces, a lack of national resources and national AI strategies that prove ineffective at achieving this objective weaken NATO’s cohesion.72Dominika Kunertova and Olivier Schmitt, 2024, “Assessing NATO’s Cohesion: Methods and Implications,” International Politics 62: 1097–1110, https://doi.org/10.1057/s41311-024-00641-1. Leading AI countries are reluctant to institutionalize transparent metrics for AI readiness that separate laboratory promise from operational proof.

This scenario points to the need to move beyond the polarizing hopes-versus-fears dichotomy of AI in order to translate technological potential into military advantage through a sound implementation strategy.73Andreas Graae, 2023, Servers before Tanks? Defence AI in Denmark, Defense AI Observatory, DAIO Study 23|18, https://defenseai.eu/wp-content/uploads/2023/11/daio_study2318_servers_before_tanks_andreas_graae.pdf. It also reminds policymakers and defense planners to budget for the cognitive dimension of technological competition. Publics and markets react to hyped narratives faster than to scientific results. Adversaries will try to exploit this gap with rhetoric about leapfrogging in AI and by proclaiming themselves winners of the AI race.

Across all three futures, NATO faces distinct challenges posed by future algorithmic warfare. NATO’s advantage from AI models rests on speed, scale, and autonomy delivered by a resilient AI triad under close human oversight. Guarded opportunism is the most likely scenario and highlights AI vulnerabilities in light of hybrid and information warfare. Brave new world is less likely but the most dangerous of the three futures. In this algorithmic future, NATO is constantly on the cusp of escalation and de-escalation spirals, and the scenario points to the dangers of rapid and widespread integration of AI models without correspondingly fast doctrinal adaptation. Minority report, meanwhile, outlines the destabilizing effects of AI hype in the absence of safety and transparency standards.

Part four: Policy recommendations

NATO’s advantage in algorithmic warfare will depend on converting AI’s speed, scale, and autonomy into reliable military capabilities while avoiding inadvertent escalation. This report suggests that the Alliance should focus on three lines of effort. First, it must build AI readiness and resilience across the Alliance. Second, it must refine military AI doctrine to preserve information dominance and to clarify response triggers under compressed timelines. Third, it must develop a deterrence strategy for its strategic AI-enabled DSS. These policy recommendations address the AI vulnerabilities and attack vectors identified in the report’s earlier sections, providing practical steps for NATO leaders implementing the Digital Transformation Vision and preparing for multidomain operations. Each recommendation is intended for near‑term adoption to set conditions for long‑term advantages from AI.

I. AI readiness and resilience

NATO should anchor its AI strategy in two core principles—literacy and redundancy—and reinforce those principles through a coordinated approach to the AI tech industry. Such an approach will help NATO avoid the risks of stale knowledge and deskilling.

Recommendation 1: Master AI literacy

AI literacy should be treated as a strategic competency for commanders, operators, and policymakers rather than as a niche topic confined to chief information officers. NATO should integrate AI education into professional military education, operational exercises, and staff development programs so that leaders understand both the promise and the limits of current AI models. AI-literate armed forces are less likely to succumb to tech-centric thinking and automation bias in future strategy and doctrine development.

NATO should also educate wider publics and political elites so that strategy debates do not become hostage to hype. Clear explanations of how models are evaluated, how data shape military performance, and how human judgment remains central are key for preparing policymakers at all levels to make informed AI-related decisions.74Sophia Hatz et al., 2025, “Local US Officials’ Views on the Impacts and Governance of AI: Evidence from 2022 and 2023 Survey Waves,” PLOS ONE 20, no. 10, https://doi.org/10.1371/journal.pone.0332919.

Recommendation 2: Engineer redundancy

Maintaining the ability to transmit information is essential for coordinated action. NATO should assume that outages and system failures will occur. The Alliance needs to exercise capabilities in communications‑degraded electromagnetic environments and design robust and rehearsed secondary systems. This involves mapping cyber and physical dependencies to avoid single points of failure.